Camera traps have been a key part of the conservation toolkit for decades. Remotely triggered video or still cameras allow researchers and managers to monitor cryptic species, survey populations, and support enforcement responses by documenting illegal activities. Increasingly, machine learning is being implemented to automate the processing of data generated by camera traps.

A recently published study showed that, despite being well-established and widely used tools in conservation, progress in the development of camera traps has plateaued since the emergence of the modern model in the mid-2000s, leaving users struggling with many of the same issues they faced a decade ago. That manufacturer ratings have not improved over time, despite technological advances, demonstrates the need for a new generation of innovative conservation camera traps. Join this group to explore existing efforts, established needs, and what next-generation camera traps might look like, including the integration of AI for data processing through initiatives like Wildlife Insights and Wild Me.

Group Highlights:

Our past Tech Tutors seasons featured multiple episodes for experienced and new camera trappers. How Do I Repair My Camera Traps? brought together WILDLABS members Laure Joanny, Alistair Stewart, and Rob Appleby and shared many troubleshooting and DIY resources for common issues.

For camera trap users looking to incorporate machine learning into the data analysis process, Sara Beery's How do I get started using machine learning for my camera traps? is an incredible resource discussing the user-friendly tool MegaDetector.

And for those who are new to camera trapping, Marcella Kelly's How do I choose the right camera trap(s) based on interests, goals, and species? will help you make important decisions based on factors like species, environment, power, durability, and more.

Finally, for an in-depth conversation on camera trap hardware and software, check out the Camera Traps Virtual Meetup featuring Sara Beery, Roland Kays, and Sam Seccombe.

And while you're here, be sure to stop by the camera trap community's collaborative troubleshooting data bank, where we're compiling common problems with the goal of creating a consistent place to exchange tips and tricks!

Header photo: ACEAA-Conservacion Amazonica

University of Nottingham

I'm an artificial intelligence researcher (mostly in the area of neural networks) with a special interest in environmental problems.

- 0 Resources

- 0 Discussions

- 1 Groups

Georgia Institute of Technology

Grad Student

- 0 Resources

- 2 Discussions

- 7 Groups

- @APendael

- | Mr.

Amos Pendael is a renowned conservation and research specialist with extensive experience in the field of Management Oriented Monitoring Skills (MOMS), remote sensing, and Geographic Information Systems (GIS). He holds an M.Sc. in Natural Resources Assessment and Management.

- 0 Resources

- 0 Discussions

- 6 Groups

- @Xavier_Mouy

- | he/him

I build software and hardware tools to help the analysis or the collection of passive acoustic data.

- 1 Resources

- 6 Discussions

- 6 Groups

- @Netty_Cheruto

- | She/her

- 23 Resources

- 49 Discussions

- 8 Groups

- @jcbotsch

- | he/him

I'm a population and community ecologist studying the effects of global change on insect populations.

- 0 Resources

- 0 Discussions

- 6 Groups

Natural Solutions

Engineer, Ph.D in Computation Ecology. Interested in developing tools for the massive acquisition of high dimensional data from new technologies (e.g., imaging, omics), their analysis and visualization.

- 0 Resources

- 0 Discussions

- 13 Groups

- @Gody

- | He

Godfrey Nyangaresi, a dedicated Protection Manager with 12+ years of wildlife conservation experience. Skilled in technologies, administration, and law enforcement, he leads protection efforts at STEP, ensuring the sustainable conservation of elephants in southern Tanzania.

- 0 Resources

- 3 Discussions

- 17 Groups

- @Mathilde

- | she/her

Natural Solutions

Engineer. I work for a web development company on web application projects for biodiversity conservation. I'm especially interested in camera traps, remote sensing, and deep learning.

- 0 Resources

- 0 Discussions

- 11 Groups

- @SueWachia

- | she/her

A marine environmentalist with diverse experience in collaborating on multidisciplinary & community-centred marine research focused on: fisheries management, blue carbon strategies, seagrass ecosystems, & ocean literacy.

- 1 Resources

- 1 Discussions

- 7 Groups

Master's student at the University of Jena / Assistant in the LEPMON Project at Dr. Gunnar Brehm's Lab

- 0 Resources

- 0 Discussions

- 1 Groups

- @taruuppal

- | She/Her

Grant researcher and writer

- 0 Resources

- 0 Discussions

- 1 Groups

Acorn removal study of Nendo Dango, Ecological Restoration Research group at the University of Granada

5 June 2023

New paper in Methods in Ecology and Evolution

24 March 2023

💙 Exciting news from Appsilon! Our flagship project, Mbaza AI, is expanding its impact on nature and biodiversity conservation. We’ve teamed up with the 🦏 Ol Pejeta Conservancy to build a model for classifying images of...

16 March 2023

The Innovation in Practice edition of Methods in Ecology and Evolution is still seeking proposals about conservation technology

6 March 2023

New technology enabling the automated monitoring of moths has been put to rigorous testing in tropical conditions in Panama by an international team of researchers

22 February 2023

Technology to End the Sixth Mass Extinction. Salary: $104k-144K; Location: Washington DC or Seattle WA, potential hybrid; 5+ years of Full stack development experience; Deadline March 15th - view post for full job...

10 February 2023

Are you excited by the potential for new technologies to help monitor the natural world? Do you enjoy communicating your passion for technology and nature with diverse audiences? We are seeking an enthusiastic...

2 February 2023

Consultancy opportunity at ZSL for an experienced monitoring specialist to support species monitoring in rewilding landscapes across Europe

31 January 2023

Are you stuck on an AI or ML challenge in your conservation work? Apply now for the chance to receive tailored expert advice from data scientists! Applications due 27th January 2023

18 January 2023

WILDLABS and Fauna & Flora International are seeking an early career Vietnamese conservationist for a 12-month paid internship position to grow and support the Southeast Asia regional community in our global...

11 January 2023

This position focuses on the ecology aspect of the project, while a second PhD in Ilmenau will be dealing with programming/AI development. Because of the high temporal resolution of our data, we can investigate how land...

9 January 2023

38 Products

Recently updated products

| Description | Activity | Replies | Groups | Updated |
|---|---|---|---|---|
| Hi there!, You should definitely check out VIAME, which includes a video annotation tool in addition to deep learning neural network training and deployment. It has a user... | +3 | | Camera Traps | 5 months 3 weeks ago |
| Featuring some of the very best spider video you'll ever see.. @JayStafstrom has been pushing the boundaries of camera technology,... | | | Camera Traps, Sensors | 5 months 3 weeks ago |
| Also, take a look at TrapTagger. It has integration with WildMe. | +4 | | AI for Conservation, Camera Traps | 5 months 3 weeks ago |
| camtrapR has a function that does what you want. I have not used it myself but it seems straightforward to use and it can run across directories of images: https://jniedballa.... | +2 | | Camera Traps, Data management and processing tools, Open Source Solutions, Software and Mobile Apps | 6 months ago |
| Thank you for the links, Robin. | +7 | | Camera Traps | 6 months 2 weeks ago |
| The two cameras you mention below tick off most of the items in your requirements list. I think the exception is the “timed start” whereby the camera would “wake up” to arm... | | | Camera Traps | 6 months 2 weeks ago |
| Hi Ben, I would be interested to see if the Instant Detect 2.0 camera system might be useful for this. The cameras can transmit thumbnails of the captured images using LoRa radio to... | +4 | | Camera Traps | 6 months 2 weeks ago |
| Hello Sam, what would you say the estimated cost was for the first version, Instant Detect 1.0? That might help my research. | | | AI for Conservation, Camera Traps, Human-Wildlife Conflict, Sensors | 6 months 2 weeks ago |
| Hi @GermanFore, I work with the BearID Project on individual identification of brown bears from faces. More recently we worked on face detection across all bear species and ran... | | | AI for Conservation, Camera Traps, Data management and processing tools, Software and Mobile Apps | 7 months ago |
| Hi Jay! Thanks for posting this here as well as your great presentation in the Variety Hour the other day! Cheers! | | | Camera Traps | 7 months 1 week ago |
| For anyone interested: the GBIF guide Best Practices for Managing and Publishing Camera Trap Data is still open for review and feedback until next week. More info can be found in... | | | Autonomous Camera Traps for Insects, Camera Traps | 7 months 2 weeks ago |
| Hi Maddie, This camera has a very quick reaction time. | | | Camera Traps | 7 months 2 weeks ago |

From the Field: Dr Raman Sukumar and Technology Developments Needed to Conserve Elephants

5 April 2017 12:00am

Camera Trap Pictures Wanted

6 March 2017 11:38am

31 March 2017 10:21am

Nice, thanks Colin! Are you deploying cameras as part of the phascogale project?

Paul - are you quite sure you don't need some camera trap photos of some gorgeous Australian mammals for your guidelines as well?

31 March 2017 10:28am

Steph,

Not quite. I'm coordinating a fauna monitoring project for a Landcare network and, of course, we hope to get phascogales. I've just retrieved a batch of cameras from nest box monitoring duty for the phascogale project and their next role will be to investigate a possible sighting of a Squirrel Glider in the area.

Colin

How to stop the thieves when all we want to capture is wildlife in action

Paul Meek

23 March 2017 12:00am

Recommendations Needed: Real-time enabled camera traps

17 August 2016 2:56pm

9 March 2017 7:32am

Hi Kai,

thanks for the links. Interesting. I requested them for pricing details.

The Panthera Anti-Poaching Cam makes use of existing networks and costs about 350 USD. That is not very expensive, is it?

Cheers,

Jan Kees

9 March 2017 9:51am

Hi Jankees

Thanks for your reply,

If there is an existing network, such as the mobile phone service provided by Royal KPN N.V., there will be no problem: 3G/4G camera traps will work well. Each camera costs 250 USD at the moment.

Nowadays hundreds of Chinese tech teams are working on LoRa and NB-IoT solutions as well. All of them want to be as successful as HUAWEI, ZTE and DJI. Nice people, good teams, good teamwork, working 12 hours each day like machines; it is like an arms race.

I am sure they are willing to support anti-poaching. I am not a tech person, but we are Chinese, and we have a duty to help solve these problems and take responsibility for them. If you come to China one day, let me know.

Thanks, and your sensingclues is great.

Regards

Kai

14 March 2017 6:13pm

A number of good points have been made. In terms of remote-enabled camera traps, you will mainly find ones that use cellular data signals to transmit images. Typically these are thumbnails rather than full resolution, so you will likely still need to retrieve the memory cards for analysis. Also, traps that transmit images tend to have a longer time lag between shots, which can be a problem. Hunting websites tend to have the most complete reviews and discussions of the various models.

If you want to use citizen science in the data analysis process, I would suggest looking at Zooniverse (www.zooniverse.org). It has a pretty well-thought-out platform.

Survey: Camera trap effects on people

2 March 2017 4:25pm

5 March 2017 3:34pm

Thanks, I sent it to my Chinese friends; I am sure some of them completed the survey.

cheers

Kai

#Tech4Wildlife Photo Challenge: Our favourites from 2016

1 March 2017 12:00am

Remote Camera Data Processing - Counting Individual Animals?

10 November 2016 12:30am

20 January 2017 12:04am

Hi Kate, it all depends on the species and the accuracy of the data set you are trying to collect. If you are purely documenting frequency of visits, this is straightforward. However, if you are recording numbers, then further analysis of photos may be needed. Some animals, like foxes, have distinctive marks or shapes, whilst smaller mammals can be a lot harder to tell apart. If this is impossible, then a time limit should be defined across all camera traps. From all the papers I have read and projects we have worked with, there doesn’t seem to be a clear standard. Hope this helps. Mike - handykam
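The "time limit" idea above can be sketched in a few lines of Python. This is only an illustration, not a standard: the 30-minute gap, the record layout (camera, species, timestamp) and the function name `independent_events` are all assumptions made for the example, and the rule used here (measure the gap from the last kept record) is one of several conventions in use.

```python
from datetime import datetime, timedelta


def independent_events(records, min_gap=timedelta(minutes=30)):
    """Thin (camera, species, timestamp) records into independent events.

    A record is kept only if at least `min_gap` has passed since the last
    kept record of the same species at the same camera. The 30-minute
    default is an arbitrary, hypothetical choice.
    """
    last_kept = {}
    events = []
    for camera, species, timestamp in sorted(records, key=lambda r: r[2]):
        key = (camera, species)
        previous = last_kept.get(key)
        if previous is None or timestamp - previous >= min_gap:
            events.append((camera, species, timestamp))
            last_kept[key] = timestamp
    return events


# Hypothetical records: three fox photos at cam01, one at cam02.
records = [
    ("cam01", "fox", datetime(2017, 1, 10, 21, 0)),
    ("cam01", "fox", datetime(2017, 1, 10, 21, 10)),
    ("cam01", "fox", datetime(2017, 1, 10, 21, 45)),
    ("cam02", "fox", datetime(2017, 1, 10, 21, 5)),
]
events = independent_events(records)
```

Whatever threshold is chosen, applying the same one across all cameras and sessions is what keeps the event counts comparable.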

3 February 2017 2:00am

Hi Kate, this may be a bit late for your analysis but I will put it here for future reference. The case you mention would be to determine independent events, not necessarily different individuals. Unless there is conspicuous colour, pattern, size, sex or other scars/markings, you shouldn't label them as different individuals. I haven't found much analysis or discussion on this point, so it seems prior experience or a "best guess" is used to determine the time between independent events. The vignette for the R package "camtrapR" (https://cran.r-project.org/web/packages/camtrapR/vignettes/DataExploration.html) mentions that "The criterion for temporal independence between records ... will affect the results of the activity plots", and you may have other data to correlate activity with abundance or density.

If you are using an occupancy analysis this may be trivial, as you will likely be lumping multiple nights of survey into a single detection period for each camera anyway (we have used six 10-day detection periods for cats in central Aus).

SECR methods may also be more useful for calculating density. Timing of events BETWEEN cameras can be used to show that photos are of different individuals, as they cannot exist in two places simultaneously or travel at a fast enough rate to be captured in that time frame (clocks must be synchronised).

I hope you have had as good a season in Bon Bon as we have in Alice Springs. Cheers, Al
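The between-camera timing argument in the post above can be illustrated with a short Python sketch. It assumes synchronised clocks, straight-line travel between cameras, and a maximum plausible travel speed that you supply yourself; the `Detection` class, the function names and all the numbers are hypothetical.

```python
import math
from dataclasses import dataclass
from datetime import datetime

EARTH_RADIUS_M = 6_371_000


@dataclass
class Detection:
    latitude: float   # camera position, decimal degrees
    longitude: float
    time: datetime    # camera clocks are assumed to be synchronised


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def must_be_different_individuals(a, b, max_speed_m_s):
    """True if one animal could not have reached both cameras in time."""
    distance_m = haversine_m(a.latitude, a.longitude, b.latitude, b.longitude)
    elapsed_s = abs((b.time - a.time).total_seconds())
    if elapsed_s == 0:
        return distance_m > 0
    return distance_m / elapsed_s > max_speed_m_s


# Two hypothetical cameras about 5.6 km apart, triggered one minute apart.
first = Detection(-23.70, 133.88, datetime(2017, 1, 10, 21, 0, 0))
second = Detection(-23.75, 133.88, datetime(2017, 1, 10, 21, 1, 0))
different = must_be_different_individuals(first, second, max_speed_m_s=15.0)
```

A `False` result says nothing either way: the two photos could still be of different animals.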

5 February 2017 8:58am

The facts are simple. If you violate the assumption of independence of sampling events you will bias the result. With multiple observations, the bias comes from overestimating occupancy by counting the same animal twice. There is therefore no answer to your problem. People try to overcome it by choosing a particular random number to try and standardise the method across sampling sessions. Let us say we decide to use one hour between new samples in all our sampling sessions. If abundance changes between sessions, our ability to detect an animal may change and our result may therefore be biased up or down depending on the trend. So we allow detectability to vary between samples to try and reduce this bias. This is done through assessing the results of the replicate samples, i.e. n days.

However, the session time determines how many counts are reduced to presence only, and as session time increases the variance of the occupancy estimate may grow. But we don't know if this increasing variance/loss of accuracy is based on a real loss of information or not, because we don't know if we are correctly throwing away information portraying the same individual or information on the presence of multiple individuals. Therefore selection of session time may affect our ability to detect a change in occupancy even when accounting for varying detection, i.e. detection may be equal if our session time is too short. In other words, if in both one hour AND two hours we detect multiple animals on the same number of plots, and convert this to the binomial (presence/absence), then detectability will be equal for both sessions. But if in one hour we detect half the number of animals as in two hours, what does this say about occupancy?

Spatially explicit (SE) models will also not help because, as far as I know, they are based on allowing multiple detections across plots, not within plots. Or cameras in this case.

Having said this, there is apparently some hope in marking a portion of individuals to increase the accuracy of SECR, or SE unmarked in this case. So my only advice would be to run an experiment with marking some foxes and use an SE model with predominantly unmarked individuals.

On a more jovial note, you could simply mine the data by assessing the power to detect a change between pre/post baiting using a variety of different session times. The reality of such a result is highly dubious but it might inspire more thought on the behaviour of this particular situation. Failing all this you could run away and join the circus.
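To make the effect of session length concrete, here is a minimal Python sketch of how detections at one camera collapse into a presence/absence detection history. The function name `detection_history`, the input format (whole days since deployment) and the example numbers are assumptions for illustration only; it is not taken from any particular occupancy package.

```python
def detection_history(detection_days, occasion_length, n_occasions):
    """Collapse detections into a 0/1 history, one entry per occasion.

    detection_days: whole days since deployment on which the species
    was photographed (repeats allowed). Multiple detections inside one
    occasion are reduced to a single 1.
    """
    history = [0] * n_occasions
    for day in detection_days:
        index = day // occasion_length
        if 0 <= index < n_occasions:
            history[index] = 1
    return history


# Hypothetical 60-day deployment with five detections.
days = [0, 1, 1, 14, 37]
ten_day = detection_history(days, occasion_length=10, n_occasions=6)
twenty_day = detection_history(days, occasion_length=20, n_occasions=3)
```

Running the same detections through several occasion lengths shows directly how much count information each choice discards, which is the trade-off discussed in the post above.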

Discussion: Wildlife Institute of India to conduct first tiger estimation in nine countries

28 November 2016 1:55pm

28 November 2016 2:07pm

@wildtiger @Shashank+Srinivasan @NJayasinghe Do you have thoughts on this?

31 January 2017 7:05pm

What species monitoring protocols do you know of that explicitly focus on one species?

-John

Discussion: Opinion of TEAM network and Wildlife Insights

7 June 2016 5:36am

24 June 2016 6:08am

Thank you. I had not seen TRAPPER.

I had seen Snoopy, CameraBase, the Sanderson & Harris executables, and TEAM. And none of them had seemed suitable for the work that I was doing.

I will investigate TRAPPER further.

30 June 2016 4:38am

I like TRAPPER's video capability.

I am yet to test it out though.

13 December 2016 12:47am

Hi Heidi,

You might also consider becoming involved in the eMammal project (emammal.si.edu) that the Smithsonian has recently developed. It has tools for both uploading and coding camera trap images, with the bonus that they are ultimately archived and made available by the Smithsonian for future researchers. My opinion is that moving toward open-access data will make all of our efforts more valuable into the future. I'm using eMammal, but I am not one of the developers or associated with the Smithsonian.

Cheers,

Robert

Conservation Leadership Programme 2017 Award

21 November 2016 12:00am

Standardization Discussion: Nomenclature

5 October 2016 4:48am

31 October 2016 2:18pm

Camera trapping is the new kid on the block, and it is still talking like a 5-year-old! It will take some time, and some journal editors insisting on uniform and properly used terms, before everyone can be sure what everyone else is talking about.

For what it's worth, here is what I understand when I read the terms you list:

Survey - the process of designing the study, putting out and servicing the camera traps, collating and analysing data and writing it up. So much much more than just the time that the camera traps were in the field.

Site - a spot on the ground. Usually these days specified as a GPS location. What you describe as a site I would call a study area.

Station - I do not recall having read this in connection with camera traps, and I would not use it. I admit that this is not consistent; I have read and I would use "bait station" or "feeding station" interchangeably with "baiting site" or "feeding site". It is of course possible to station a camera trap at a baiting site, or site a camera trap at a feeding station!

Camera - is certainly a physical device that takes photos, but you cannot record animals with just a camera; you need something to trigger it. A self-triggering camera is a camera trap (or trail camera, game camera etc).

A station session (see above about station) would be OK if site was substituted for station.

Peter

12 November 2016 2:39pm

Hi folks,

for Site - in our camera trap data base we use the mountain or National/Nature park

Survey - is connected to the time scale - i.e. This mountain2015 or 2016

Station is the actual place of the cameras (1 or 2) with GPS coordinates

Diana
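One way to pin such terms down is to write them as a data model. The Python sketch below follows the usage described in the post directly above (site = mountain or park, survey = a site in a given year, station = a GPS-located spot with one or two cameras). It is one possible convention among those discussed in this thread, and every class and field name is a hypothetical choice for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Site:
    """A mountain or national/nature park."""
    name: str


@dataclass(frozen=True)
class Station:
    """The actual place where one or two cameras stand."""
    station_id: str
    site: Site
    latitude: float
    longitude: float
    camera_ids: tuple

    def __post_init__(self):
        if not 1 <= len(self.camera_ids) <= 2:
            raise ValueError("a station holds one or two cameras")


@dataclass
class Survey:
    """One site sampled in one year."""
    site: Site
    year: int
    stations: list = field(default_factory=list)

    @property
    def label(self):
        return f"{self.site.name} {self.year}"


# Hypothetical example values.
site = Site("Example Mountain")
station = Station("S01", site, 42.0, 23.0, ("camA", "camB"))
survey = Survey(site, 2015, [station])
```

Swapping in another definition from this thread (for example, treating "site" as the GPS point) only changes which class carries the coordinates.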

Article and Discussion: Scale of camera trap studies

25 August 2016 11:50am

5 October 2016 3:37pm

@heidi.h Definitely a valid question. It looks like they have it connected to a zooniverse project, which means they are using citizen science to not only set up and maintain the cameras, but to process the data. Pretty neat.

What we do

Our main task is to get our trail camera volunteers up and running with equipment and training. Volunteers can now apply to host a camera in Wisconsin survey blocks where they have access to land (visit dnr.wi.gov, keyword "Snapshot Wisconsin" for more information). Trail camera volunteers are in charge of setting up a camera and retrieving its SD card (containing saved photos) at least four times per year. Volunteers then send the photos to WDNR to be posted on Zooniverse. By the end of 2017, we expect to have enough cameras for > 2000 volunteers to participate in the project -- these cameras will produce millions of photos each year!

While we get our trail camera volunteers set up, we have plenty of other photos to show the Zooniverse community. WDNR staff have placed over 300 trail cameras in two areas of the state now home to a species of elk (Cervus elaphus) formerly abundant throughout North America. Elk were extirpated from Wisconsin in the 1800s due to overhunting and habitat loss. Reintroduction efforts began in 1995 and continue today, and we're curious to know how the elk are doing! Classifying the photos from the elk reintroduction areas will give us great information about population size and distribution, and examine how elk presence overlaps with that of wolves--natural predators of elk.

5 October 2016 3:42pm

But in answer to your question, @P.Glover.Kapfer , I haven't heard of any near this large. TEAM Network would be the closest - 17 sites, 14 countries and approximately 1000 camera traps deployed over 2000 km² that are monitored annually. @efegraus - does TEAM Network have ambitions to expand to this sort of scale?

@ollie.wearn perhaps you might know of some other big scale camera trapping projects?

8 November 2016 1:15pm

Bringing over some of the comments we're getting on Twitter:

@WILDLABSNET Our @UAlberta lab deploys ARUs at 1000s of sites in Alberta each year in collaboration with @ABbiodiversity #bioacoustics

— Elly Knight (@ellycknight) November 7, 2016

@WILDLABSNET yeah, @TEAMNetworkOrg does that...pretty sure thousands of cameras across 15 or sites

— Asia Murphy (@am_anatiala) November 4, 2016

Neotropical Migratory Bird Conservation Act grants via USFWS

8 November 2016 12:00am

How many cameras in a camera trap?

4 July 2016 4:32am

11 July 2016 2:55pm

Hi

In some cases you need to recognize individuals, such as in capture-recapture analysis for abundance/density estimation, or you might want an index of the individuals that are detected (instead of the number of detections).

If you need to recognize individuals, it is better to have both flanks of the animal photographed, and even better if the animal has natural marks (such as the rosettes or stripes of jaguars or tigers), but you can also use scars and general body complexion to help.

You can still do a capture-recapture study with one camera trap, but you have to choose pictures of only one side of the animal and discard all pictures of the other side (since you won't know if they are the same animal). Doing that decreases the detection probability, because it becomes the product of the likelihood of the animal walking past the camera and the likelihood of it going in the direction that you need.

Using one camera per site is a good setup for a site occupancy study, and you can even estimate abundance with the Royle-Nichols method, if your data fit it.

I hope this helps

Bests

Juan
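The two probability statements in the post above can be written out as a small Python sketch. The first function is simply the product described there, which assumes passing the camera and facing the right way are independent. The second is the standard Royle-Nichols link between site abundance N and site-level detection, p = 1 - (1 - r)^N, where r is the per-individual detection probability. The function names and example numbers are hypothetical.

```python
def single_flank_detection(p_pass, p_correct_side):
    """Detection probability when only one flank can be used.

    Assumes passing the camera and showing the required side are
    independent, so the probabilities multiply.
    """
    return p_pass * p_correct_side


def royle_nichols_site_detection(r, abundance):
    """Probability of detecting the species at a site holding
    `abundance` individuals, each detected with probability r."""
    return 1 - (1 - r) ** abundance


# Hypothetical values for illustration only.
one_sided = single_flank_detection(p_pass=0.4, p_correct_side=0.5)
site_level = royle_nichols_site_detection(r=0.2, abundance=3)
```

In a real analysis r and N are estimated from the detection histories rather than supplied by hand; the functions only show how the quantities relate.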

12 July 2016 7:42pm

We have just started testing an array of three cameras recording video at an African wild dog marking site. The idea is to give seamless coverage of an area larger than can be monitored by one camera, with maximum chance of detecting animals approaching from any direction. As a bonus it produces pictures of both sides. The cameras have only been out for a week, so it is too early to assess how well the arrangement works.

1 November 2016 1:38pm

We have a set of three Bushnells covering an African wild dog scent-marking site. They are at the apices of a triangle, with each camera just at the edge of the field of view of one of the others. This gives us a higher probability of detecting animals at the site, a better view of the action on at least one of the cameras, and no dead spots below each camera.

I was hoping to use two Reconyx Ultrafire XR6s on game trails at each of ten scent stations, but the cameras cannot detect animals walking towards or away from them.

Peter

Internet Cats Just Got Bigger

26 October 2016 12:00am

Perspectives from the World Ranger Congress

John Probert

10 August 2016 12:00am

Resource: Wildlife Speed Cameras: Measuring animal travel speed and day range using camera traps

28 April 2016 2:39pm

13 June 2016 4:59pm

Here's a set of tools that could be applicable to this idea

https://github.com/pfr/VideoSpeedTracker

7 August 2016 11:15pm

Hi Steph - just to follow up on your post: @MarcusRowcliffe , James Durrant and I have been working on a bit of software to implement the "computer vision" techniques that are mentioned in that paragraph. You can see a demonstration of it in action here. It requires camera-trappers to "calibrate" their camera traps during setup (or take-down), by taking pictures of a standard object (for example, we use a 1 m pole held vertically) at different distances. The calibration takes ~10 mins per location. From this, you can reconstruct the paths that animals take in front of cameras, the total distance they travelled, and therefore their speed.
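As a rough illustration of how pole calibration can turn pixel positions into speeds, here is a simplified Python sketch. It is not the software described above. It swaps in a basic pinhole-camera model and assumes flat ground, a level camera, and that you can mark where the animal's feet touch the ground in each frame. All function names, parameters and numbers are hypothetical.

```python
import math
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = a * xs + b."""
    x_bar, y_bar = mean(xs), mean(ys)
    a = (sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
         / sum((x - x_bar) ** 2 for x in xs))
    return a, y_bar - a * x_bar


def calibrate(pole_shots, pole_height_m=1.0):
    """pole_shots: (distance_m, base_row_px, pole_height_px) per photo.

    Returns the focal length in pixels and a line mapping the image row
    of a ground point to 1 / distance (flat-ground assumption).
    """
    focal_px = mean(h * d / pole_height_m for d, _, h in pole_shots)
    a, b = fit_line([row for _, row, _ in pole_shots],
                    [1.0 / d for d, _, _ in pole_shots])
    return focal_px, a, b


def ground_position(x_px, y_px, calibration, centre_x_px):
    """Convert a foot position in the image to (lateral_m, depth_m)."""
    focal_px, a, b = calibration
    depth_m = 1.0 / (a * y_px + b)  # valid only for rows below the horizon
    lateral_m = (x_px - centre_x_px) * depth_m / focal_px
    return lateral_m, depth_m


def average_speed(track, calibration, centre_x_px):
    """track: (time_s, x_px, y_px) of the animal's feet, in time order."""
    points = [ground_position(x, y, calibration, centre_x_px)
              for _, x, y in track]
    path_m = sum(math.dist(p, q) for p, q in zip(points, points[1:]))
    return path_m / (track[-1][0] - track[0][0])


# Synthetic calibration: a 1 m pole photographed at 2, 4 and 8 m.
calibration = calibrate([(2, 750, 500), (4, 625, 250), (8, 562.5, 125)])
speed = average_speed([(0, 960, 625), (2, 1460, 625)], calibration, 960)
```

Real deployments on sloping or uneven ground would need a richer calibration model than this flat-ground line.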

[ARCHIVED EVENT]: Approaches to Analysing Camera Trap Data

18 April 2016 3:45pm

5 August 2016 9:54pm

Hi Steph,

Only just discovered this site, so I'm a bit late to the game.

I'd love to hear what your main take-aways were from this meeting!

Best,

Louise

5 August 2016 10:35pm

Hi Louise,

Welcome! Unfortunately, an uncomfortably busy calendar meant I ended up missing this gathering. However, I'm sure that @SteffenOppel @Tomswinfield or @ali+johnston (I think you were all involved?) might be kind enough to jump in here and share some of their key take aways from this discussion?

Steph

How can technology help us monitor those small cold-blooded critters that live in caves?

Tony Whitten

25 July 2016 12:00am

Discussion: 360° Camera for Arboreal Camera Trapping

19 July 2016 2:38pm

Camera traps reveal mysteries of nature

Roland Kays

18 July 2016 12:00am

Discussion: Self-powered camera trap

24 November 2015 7:36pm

1 December 2015 6:27pm

I was initially thinking of dozens or maybe even hundreds of nodes coming back to a central wired connection point. I wonder if something like Google's project Loon could work in place of an on-the-ground network.

But stepping back from the tech for a moment, really the problem we're trying to solve here is being able to have remote monitoring cameras that don't need anyone to go out to change batteries or memory cards. After salaries, vehicles/transportation is the top expense at pretty much all of the conservation partners we have. Anything we can do to reduce the travel (and the time of the people as well) is huge.

1 December 2015 6:37pm

So, in terms of power, does the "classic" solution with a set of solar panel cells on top of the box have some major flaws? I'm pretty sure I've seen self-powered meteo stations looking like this positioned along motorways/highways (I don't remember which country, or even which continent, it was :-) Not being an expert in photovoltaics, I would risk saying that a purposely-designed cell pointing up, directly at the sun, will have better efficiency than a re-purposed CMOS sensor :-)

And indeed, talking to something overhead (either the balloons or Facebook's drones - forgot the name - or maybe even satellites?) would probably be much simpler than ground-based communication. If we had a big enough energy budget, the communication channel would be "relatively easy" to implement...

4 July 2016 11:51am

Some camera trap manufacturers offer solar power as an add-on or option off the shelf. I agree that using the image seems like a solution looking for a problem.

The Highs and Lows of Camera Traps for Rapid Inventories in the Rainforest Canopy

Andy Whitworth

4 July 2016 12:00am

Wildlife Crime Tech Challenge Accelerator Bootcamp

Sophie Maxwell

24 June 2016 12:00am

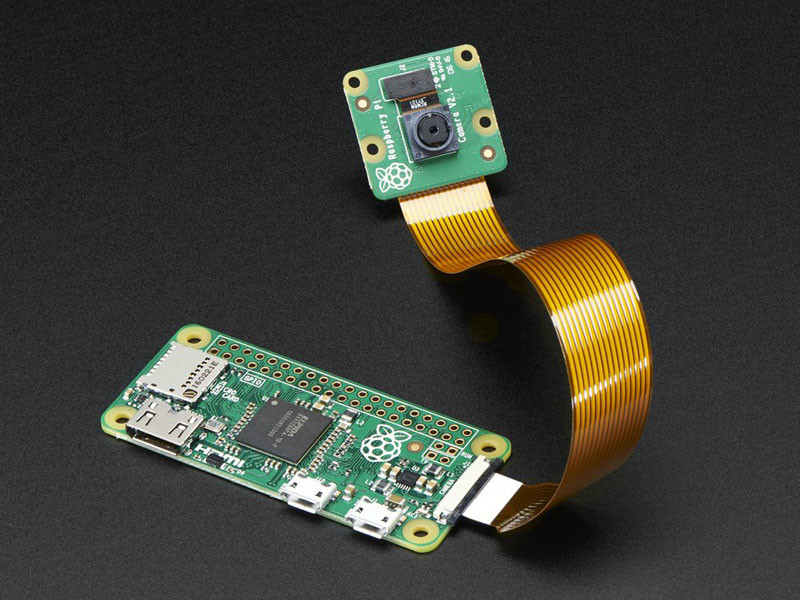

Project Feedback Wanted: Building Low Cost Cameras

2 March 2016 9:59am

11 May 2016 6:27pm

Thanks for this very interesting post! I was also trying to develop an inexpensive camera trap, but without good results. I think using a PIR sensor can give more battery life than motion detection in software. Can you give more details about the components you used, please?

Thanks

Paolo
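The battery-life point in the post above comes down to duty cycle, which a back-of-the-envelope Python sketch can show. Every number below is a made-up placeholder, not a measurement of any real board or sensor, and the function name is hypothetical. Substitute currents measured on your own hardware.

```python
SECONDS_PER_DAY = 86_400


def battery_life_days(capacity_mah, idle_ma, active_ma, active_seconds_per_day):
    """Estimate battery life from average current draw.

    idle_ma:   current while waiting for a trigger
    active_ma: current while the camera and processor are running
    """
    active_fraction = active_seconds_per_day / SECONDS_PER_DAY
    average_ma = idle_ma * (1 - active_fraction) + active_ma * active_fraction
    return capacity_mah / average_ma / 24


# Hypothetical figures: a PIR-triggered unit sleeps at 1 mA and wakes
# for 10 minutes a day in total; software motion detection keeps the
# camera and processor running at 250 mA around the clock.
pir_days = battery_life_days(10_000, idle_ma=1, active_ma=250,
                             active_seconds_per_day=600)
software_days = battery_life_days(10_000, idle_ma=250, active_ma=250,
                                  active_seconds_per_day=SECONDS_PER_DAY)
```

The comparison is only as good as the currents fed in, but it shows why a low sleep current matters far more than active draw when triggers are rare.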

20 May 2016 11:07pm

Hi, sorry, I've been away. I'll list more about the parts etc. In the meantime, the Pi Zero has just had an upgrade:

http://petapixel.com/2016/05/19/5-raspberry-pi-zero-now-camera-compatible/

TEAM Network and Wildlife Insights

Eric Fegraus

28 April 2016 12:00am

Is Google’s Cloud Vision useful for identifying animals from camera-trap photos?

Aditya Gangadharan

20 April 2016 12:00am

Disruptive Technology: Embracing the Transformative Impacts of Software on Society

10 March 2016 12:00am

[ARCHIVED]: Smithsonian Course: Camera Trapping Study Design and Data Analysis for Occupancy and Density Estimation

3 February 2016 2:25pm

Wildlife Crime Tech Challenge: Winners Announced!

22 January 2016 12:00am

31 March 2017 10:18am

Paul,

I can get some when I do my next deployment (sometime next week at this stage).

Colin