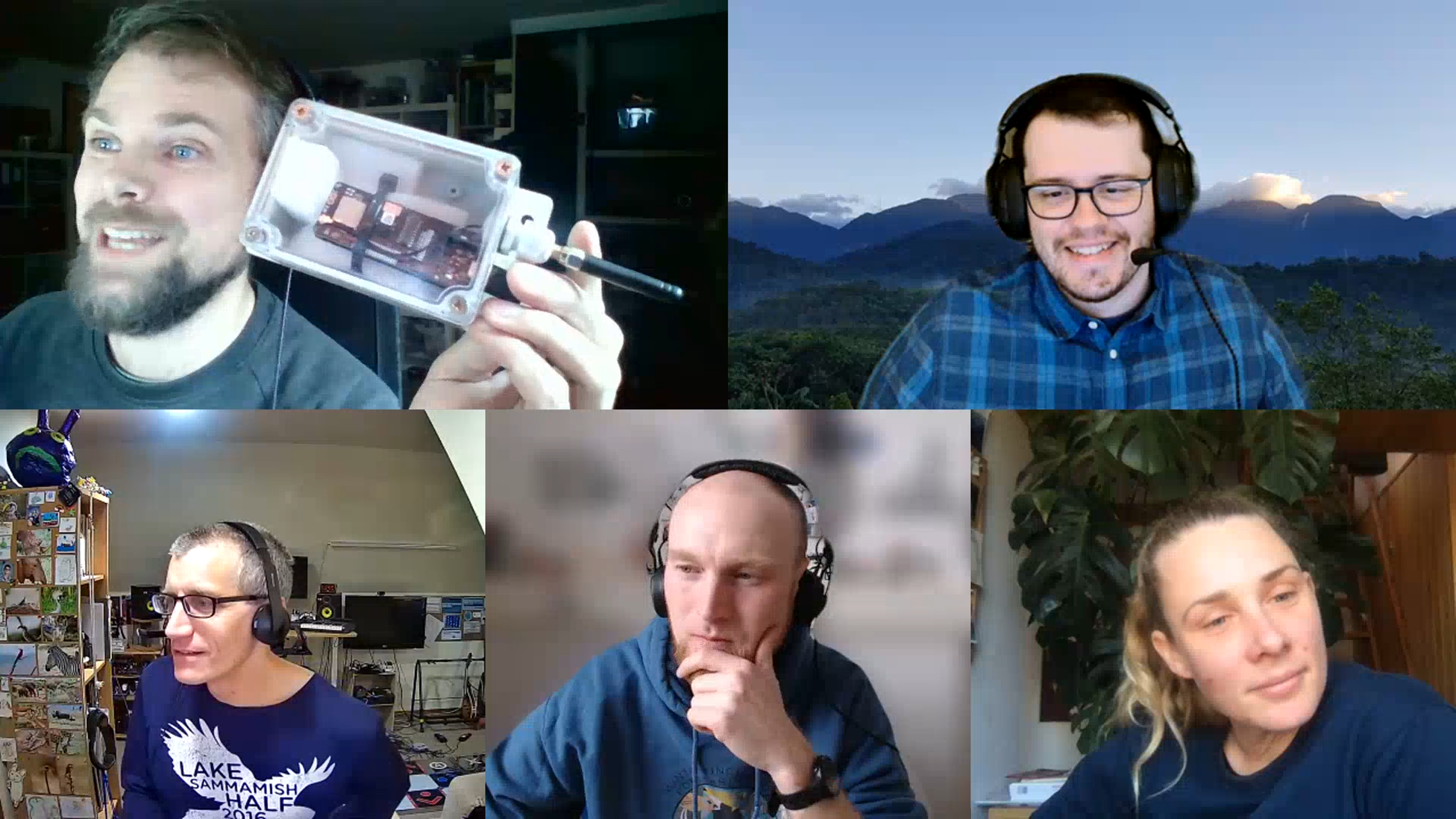

AI for Conservation Office Hours was first piloted back in 2021 by WILDLABS and Dan Morris, from Google's AI for Nature and Society program. Office Hours connects conservationists with AI specialists to provide direct support and advice on solving AI and ML problems in their conservation projects. This year we built on the lessons learned from the pilot programme to streamline the process, allowing us to help even more individuals with their issues, as well as establish some long-lasting professional connections that will continue to help them in their careers!

For AI for Conservation Office Hours 2023 we tried to reach as many conservationists as possible to offer one-on-one video calls. Throughout February and March, we were able to host 15 virtual sessions for conservationists from 12 countries, providing valuable 1:1 project advice from some of the top leaders in AI. We'd like to extend a huge thank you to experts Dan Morris, Tomer Gadot, Ștefan Istrate, Stefan Kahl and Tom Denton for sharing their knowledge and advice with participants!

The applications we received for this round of Office Hours showcased the exciting scope of our community members’ projects, with a particularly large number of applications coming from those working in the bioacoustics and camera trap/imaging fields. Along with allowing us to match participants with experts to receive bespoke advice, these applications are also incredibly valuable to the WILDLABS team, as they help us understand what our community is working on right now, what they need help with in order to be successful, and which areas are primed for innovation with AI.

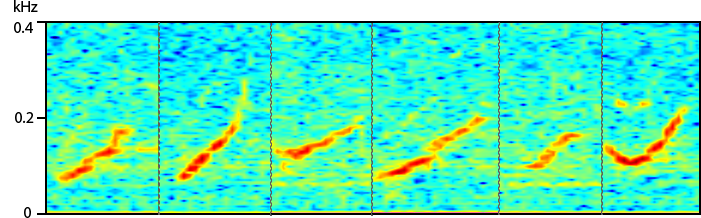

The bioacoustics projects we reviewed included topics such as automated call recognition for rare low-density species, neural networks for detection and identification of bird vocalisations, and the passive acoustic monitoring of turtle vocalisations.

In one project, Susan Parks and her team from Syracuse University sought advice on automated approaches to improve North Atlantic right whale density estimates from passive acoustic recordings. To be successful, their team needed guidance on how to standardise their data inputs for data classification, which unsupervised or supervised approaches would be most feasible, and which features would be the most robust when considering variable background noise prevalent in their recordings. Given the relatively small amount of labelled training data, Tom Denton suggested first trying to extract audio features with an off-the-shelf model that’s not specific to whales, then using an unsupervised clustering approach to try to pick out individual voices. The discussion touched on some specific packages that would work effectively with the tools the team is already using, e.g. the Matlab implementation of the DBSCAN algorithm.

Images: 1) Examples of variability of the same stereotyped contact call from different individuals. 2) Examples of a counter-calling bout of ~3 different individuals.
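For readers curious what the clustering step in Tom's suggestion might look like, here is a minimal sketch of DBSCAN in plain Python. It assumes the audio features have already been extracted by some off-the-shelf model; the two-dimensional `embeddings` below are made-up toy values, and the `eps` and `min_samples` settings are illustrative rather than recommendations. In practice the team would more likely reach for an existing implementation such as MATLAB's `dbscan` or scikit-learn's `DBSCAN`.

```python
import math

NOISE = -1  # label for points that belong to no cluster


def dbscan(points, eps, min_samples):
    """Return one label per point: a cluster id (0, 1, ...) or NOISE."""
    n = len(points)
    # Neighbours within eps of each point (a point counts as its own neighbour).
    neighbours = [
        [j for j in range(n) if math.dist(points[i], points[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbours[i]) < min_samples:
            labels[i] = NOISE  # may still be claimed later as a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbours[i])
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: joins but does not expand
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbours[j]) >= min_samples:
                queue.extend(neighbours[j])  # core point: keep growing
    return labels


# Toy stand-ins for per-call feature vectors (hypothetical values).
embeddings = [
    (0.0, 0.0), (0.1, 0.0), (0.0, 0.1),  # calls that sound alike
    (5.0, 5.0), (5.1, 5.0), (5.0, 5.1),  # a second group of similar calls
    (10.0, 0.0),                         # an isolated call
]
labels = dbscan(embeddings, eps=0.5, min_samples=2)
print(labels)
```

Each resulting cluster is only a candidate "voice". Whether clusters really correspond to individual whales depends on the features and on how much the background noise varies, so the labels would need checking against known recordings.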

Another set of bioacoustics questions came from Robin Sandfort, who is using acoustic recorders to survey forest dormouse populations in the Alps. Robin was looking for guidance on how to deploy models on an edge device, as well as how to improve accuracy on a binary (dormouse/not-dormouse) model. Stefan and Dan suggested that before moving straight to edge AI, Robin scale his analysis up on a desktop environment first, to have a lightweight way of exploring different models, and to be able to get a “version 1” into his workflow as soon as possible. Stefan also suggested that even though Robin has a binary problem, sometimes adding more classes to the model (for example, separate classes for birds or human activity) actually makes the binary problem easier, by giving the model more information about the background categories.
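As a rough illustration of the multi-class idea, the sketch below shows how a model trained on several classes can still answer the binary question: convert its raw scores to probabilities and read off the dormouse probability. The class names, scores, and 0.5 threshold are all hypothetical, and this is not Robin's actual model.

```python
import math

# Hypothetical class list: one target class plus explicit background classes.
CLASSES = ["dormouse", "bird", "human_activity", "other_background"]


def softmax(logits):
    """Turn raw model scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def is_dormouse(logits, threshold=0.5):
    """Collapse a multi-class prediction into a dormouse/not-dormouse decision."""
    p_dormouse = softmax(logits)[CLASSES.index("dormouse")]
    return p_dormouse >= threshold, p_dormouse


# Made-up scores for two audio clips.
print(is_dormouse([2.0, 0.5, 0.1, 0.0]))  # dormouse scores highest
print(is_dormouse([0.2, 3.0, 0.1, 0.0]))  # bird scores highest
```

The final answer is still binary, but during training the model has had to learn what birds and human activity sound like rather than lumping them into one undifferentiated "not-dormouse" class.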

Among the camera trap and imaging projects we helped with in this round of Office Hours were those focused on identifying bees, classifying deer, and evaluating the tradeoffs of edge vs. cloud-based AI. One project from Pakistan working on using camera traps to help mitigate human-wildlife conflict with snow leopards sought advice on improving the accuracy of eliminating blank images - especially blanks triggered by rain and snow - and classifying big cats. The expert team offered advice on trading image resolution against the number of transmitted images when bandwidth is constrained, best practices for minimizing AI false positives, and leveraging publicly available training data from similar species and ecosystems when data is scarce for the target species and locations.

This AI camera trap acts like an early warning system to detect and notify within a minute about the presence of a predator species, such as the snow leopard. Notifications via SMS along with a GIF image are sent to the relevant focal point to prevent attacks on livestock. pic.twitter.com/GC7KiCicd9

— WWF-Pakistan (@WWFPak) March 3, 2023
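The resolution-versus-count trade-off the experts discussed comes down to simple arithmetic, sketched below. Every number here is an illustrative assumption: the 5 MB daily budget, the two resolutions, and the rough figure of 0.25 bytes per pixel for a compressed image are not taken from the project.

```python
def images_per_day(budget_bytes, width, height, bytes_per_pixel=0.25):
    """Estimate how many images of a given size fit in a daily data budget.

    bytes_per_pixel is an assumed average for compressed images; real
    values vary with image content and compression settings.
    """
    image_bytes = width * height * bytes_per_pixel
    return int(budget_bytes // image_bytes)


DAILY_BUDGET = 5_000_000  # hypothetical 5 MB per day over a cellular link

full_res = images_per_day(DAILY_BUDGET, 1920, 1080)
low_res = images_per_day(DAILY_BUDGET, 640, 360)
print(f"1920x1080: {full_res} images/day, 640x360: {low_res} images/day")
```

Under these assumptions, cutting each side of the image to a third allows roughly nine times as many images through the same link, which may matter more than fine detail when the goal is a quick alert.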

We also helped with AI projects related to drones and mapping, such as Tojo Michael Rasolozaka’s project with WWF Madagascar looking at using artificial intelligence with drones for the measurement, monitoring, and counting of mangrove reforestation sites. Tojo and his team are using images from quadcopter and fixed-wing drones to map the reforestation and restoration of degraded forests. They use the location, area and number of planted trees in a given reforestation area to see the evolution of reforestation and monitor the survival rate. This task, however, requires hours and hours of manually counting planted trees, so Tojo reached out to Office Hours for help automating the calculation of surface area and the counting of planted trees or nurseries, which would support monitoring of the plants’ health and make it easier to identify the existing species in a restoration site. Dan Morris suggested trying the open-source DeepForest model before embarking on a custom training process; in the course of discussing this option, Tojo and Dan were able to test this model and achieve good initial results, at which point Tojo was introduced to the model’s developer to discuss best practices and fine-tuning.

Thanks to the AI for conservation office hour sessions and the orientation obtained from a specialist during the discussion session, I now have a serious lead for the use of artificial intelligence with drones in the framework of mangrove restoration monitoring. It is the same for the implementation of a machine learning open source to accelerate the creation of orthophoto at very high resolution with the images captured by drone. The first tests and results using the tools proposed by the specialists are very promising.

Tojo Michael Rasolozaka
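To give a sense of how detector output could feed the counting and survival-rate monitoring described above, here is a minimal sketch. It assumes a tree detector returns one record per detected tree with a confidence score; the records, the 0.3 score threshold, and the planted-tree figure are all made up, and this is not real DeepForest output or Tojo's actual workflow.

```python
def count_trees(detections, min_score=0.3):
    """Count detections whose confidence meets the threshold."""
    return sum(1 for d in detections if d["score"] >= min_score)


def survival_rate(detected, planted):
    """Share of planted trees that were detected in the drone imagery."""
    if planted <= 0:
        raise ValueError("planted must be a positive number")
    return detected / planted


# Hypothetical detections for one reforestation plot.
detections = [
    {"score": 0.90},
    {"score": 0.80},
    {"score": 0.45},
    {"score": 0.20},  # below threshold: treated as a likely false positive
    {"score": 0.10},
]

detected = count_trees(detections)
rate = survival_rate(detected, planted=4)
print(f"{detected} trees detected, survival rate {rate:.0%}")
```

The threshold choice matters here: set too low, false positives inflate the count (and the rate can even exceed 100%); set too high, small or partly hidden seedlings are missed. Comparing automated counts against a few manually counted plots is a reasonable way to tune it.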

Where a 1:1 session wasn’t possible or practical for meeting specific project needs, Dan also provided in-depth advice and guidance via email to 6 additional conservationists, connecting them with other experts who could help and pointing them to useful software or hardware solutions suited to their projects and issues. The projects aided by this portion of the Office Hours programme included classifying insect species from camera trap data, using language models to tokenize newspaper stories on human-elephant conflict, running on-the-edge computation to identify feral cats for real-time alerts, and counting prairie dog burrows in Google Earth Engine.

In March we also held a group drop-in session for conservationists who were just starting out with AI in their projects. Once again, our cohort of AI experts joined us to discuss AI and ML in general and offer more advice for those who stopped by. In this session, we heard about some very interesting work, such as using AI language models to extract information on Amazon rainforest judicial cases from PDFs, and exploring the playback of animal vocalisations.

While this whole programme was designed to provide advice to conservationists, Dan and the WILDLABS team are learning just as much about the incredible, diverse, and innovative work going on all around the world in our global conservation tech community. We hope our participants take away the knowledge they need to confidently use AI in their current and future projects, but just as importantly, we hope to translate what we’re learning from all of you into programs that meet your needs and help you develop the conservation tech skills you value the most. We are excited to host more Office Hours programmes just like this in the future, so keep an eye out for more announcements later this year!

Reading papers can only tell us what the conservation technology community is already doing, and what tools they're already using: these office hours offer an amazing insight into what ecologists are trying to do, and why some tools aren't working for them, which helps guide the technology we develop and the resources we provide to get the most out of that technology.

Dan Morris

Once again, we would like to thank all our AI experts for their time and effort in making this whole programme possible. To all the conservationists who took part in this year’s AI for Conservation Office Hours, we would love to hear more about how the sessions helped your project in the comments below!

Thank you, and we’ll see you next time in Office Hours!

Header image: Lukas Picek | Detection, identification and monitoring of animals by advanced computer vision methods project
