Autonomous camera traps for insects provide a tool for long-term remote monitoring of insects. These systems bring together cameras, computer vision, and autonomous infrastructure such as solar panels, mini computers, and data telemetry to collect images of insects.

With increasing recognition of the importance of insects as the dominant component of almost all ecosystems, there are growing concerns that insect biodiversity has declined globally, with serious consequences for the ecosystem services on which we all depend.

Automated camera traps for insects offer one of the most practical and cost-effective solutions for more standardised monitoring of insects across the globe. However, to realise this we need interdisciplinary teams who can work together to develop the hardware systems, AI components, metadata standards, data analysis, and much more.

This WILDLABS group has been set up by people from around the world who have individually been tackling parts of this challenge and who believe we can do more by working together.

We hope you will become part of this group where we share our knowledge and expertise to advance this technology.

Check out Tom's Variety Hour talk for an introduction to this group.

Learn about Autonomous Camera Traps for Insects by checking out recordings of our webinar series:

- Hardware design of camera traps for moth monitoring

- Assessing the effectiveness of these autonomous systems in real-world settings, and comparing results with traditional monitoring methods

- Designing machine learning tools to process camera trap data automatically

- Developing automated camera systems for monitoring pollinators

- India-focused projects on insect monitoring

Meet the rest of the group and introduce yourself on our welcome thread - https://www.wildlabs.net/discussion/welcome-autonomous-camera-traps-insects-group

Group curators

- @tom_august (he/him)

Computational ecologist with interests in computer vision, citizen science, open science, drones, acoustics, data viz, software engineering, public engagement


| Description | Groups | Updated |
|---|---|---|
| Hey there @JakeBurton, sorry, I did not see your message! Why don't you shoot me an email with some tentative availability to luca.pegoraro (at) wsl.ch, and we take it from... | Autonomous Camera Traps for Insects | 1 day 6 hours ago |
| Very useful! Thanks a lot! | Autonomous Camera Traps for Insects | 3 weeks ago |
| Gotcha, well I look forward to seeing future iterations and following along with your progress!! | Autonomous Camera Traps for Insects, AI for Conservation, Emerging Tech, Open Source Solutions | 4 weeks ago |
| More cool things surrounding the Mothbox project keep happening! Here’s a recap of cool developments over the past month! New Teammate! Bri... | Autonomous Camera Traps for Insects | 4 weeks 1 day ago |
| Greetings Everyone, We are so excited to share details of our WILDLABS AWARDS project "Enhancing Pollinator Conservation through Deep... | AI for Conservation, Autonomous Camera Traps for Insects | 1 month 1 week ago |
| For our [mothbox project](https://forum.openhardware.science/c/projects/mothbox/73) we are programming pijuices and pis to automatically... | Autonomous Camera Traps for Insects | 2 months ago |
| Hi, I made a little utility script that folks here might find useful (or might have MUCH BETTER VERSIONS OF! and if so, let me know!) ... | Autonomous Camera Traps for Insects | 2 months 2 weeks ago |
| We did some more testing with the Mothbeam in the forest. It's the height of dry season right now, so not many moths came out, but the mothbeam shined super bright and attracted a... | Autonomous Camera Traps for Insects | 3 months ago |
| Hi Danilo, yes just in time ;-) | Camera Traps, Autonomous Camera Traps for Insects | 3 months 2 weeks ago |
| Yep, here: Currently it only installs on older Jetsons as in the coming weeks I’ll finish the install code for current jetsons. Technically speaking, if you were an IT specialist... | Autonomous Camera Traps for Insects, Camera Traps | 4 months ago |
| Great work! I very much look forward to trying out the MothBeam light. That's going to be a huge help in making moth monitoring more accessible. And well done digging into the... | Autonomous Camera Traps for Insects | 4 months ago |
| The preprint to our camera trap paper is now available at bioRxiv. | Autonomous Camera Traps for Insects | 5 months 1 week ago |

Share Your Work in a Conservation Technology Video

17 May 2024 9:06pm

Insect cameras in The Inventory

1 May 2024 9:26pm

3 May 2024 2:13pm

Thanks very much @LucaPego! Just to clarify, 'Products' does cover hardware, but it also includes software and databases as well. Basically anything that you can take and use in your work. Sounds like we should also be including models in that as well!

It would be great to see more databases and software listed and reviewed on there. We would also be interested to learn more about your internal efforts in your community and what overlap there is for sure. Could we set up a meeting?

16 May 2024 3:16pm

Hey there @JakeBurton , sorry, I did not see your message!

Why don't you shoot me an email with some tentative availability to luca.pegoraro (at) wsl.ch, and we take it from there?

Again sorry for the delay!

Travel grants for insect monitoring and AI

3 May 2024 5:20pm

The Inventory User Guide

1 May 2024 12:46pm

Introducing The Inventory!

1 May 2024 12:46pm

2 May 2024 3:08pm

3 May 2024 5:33pm

17 May 2024 7:29am

Auto-Wake a Pi5 without a Pijuice! (Mothbox)

25 April 2024 5:44pm

26 April 2024 9:51am

Very useful! Thanks a lot!

Postdoc: Biologging & Camera Trap Data Integration

22 April 2024 10:10pm

WILDLABS AWARDS 2024 – MothBox

15 April 2024 5:06am

18 April 2024 10:39am

Already an update from @hikinghack:

19 April 2024 12:00pm

Yeah, we got it about as bare-bones as possible for this level of photo resolution and duration in the field. The main costs right now are:

- Pi: $80

- PiJuice: $75

- Battery: $85

- 64MP camera: $60

which lands us at $300 already. But we might be able to eliminate the PiJuice, which would mean fewer moving parts and cut 1/4 of our costs! Compared to something like a single Logitech Brio camera that sells for $200 and only gets us about 16MP, we've made this thing as cheap as we could figure out! :)
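As a quick check of the numbers above, here is a minimal Python sketch that uses only the prices quoted in the post; it confirms that dropping the PiJuice would remove a quarter of the $300 component cost.

```python
# Component prices quoted in the post (USD, approximate, April 2024).
costs = {
    "Raspberry Pi": 80,
    "PiJuice": 75,
    "Battery": 85,
    "64MP camera": 60,
}

total = sum(costs.values())
pijuice_share = costs["PiJuice"] / total  # fraction of the build cost
total_without_pijuice = total - costs["PiJuice"]

print(f"Total: ${total}")                                # Total: $300
print(f"PiJuice share of total: {pijuice_share:.0%}")    # PiJuice share of total: 25%
print(f"Total without PiJuice: ${total_without_pijuice}")  # Total without PiJuice: $225
```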

19 April 2024 12:54pm

Gotcha, well I look forward to seeing future iterations and following along with your progress!!

Mothbox v3.2 Updates - Solar, HDR, Wifi Hotspots, and More!

18 April 2024 4:58am

WILDLABS AWARDS 2024 - Enhancing Pollinator Conservation through Deep Neural Network Development

7 April 2024 5:55pm

Useful Script to easily program the Pijuice and Schedule Raspberry Pi happenings

17 March 2024 3:10am

Useful Pi Script for Backing Up

1 March 2024 6:34pm

Mothbox + Mothbeam Update: 4

31 January 2024 7:09pm

15 February 2024 4:49pm

We did some more testing with the Mothbeam in the forest. It's the height of the dry season right now, so not many moths came out, but the Mothbeam shone super bright and attracted a whole bunch of really tiny things that swarmed a lot, and some nocturnal bees.

You could also see the Mothbeam's aura from far away in the forest, so that was impressive!

I also tested attaching a 12V USB booster cable to the Mothbeam, and it works nicely! So you can attach regular 5V USB battery packs to the Mothbeam as well!

Computer Vision for Ecology Workshop 2025 Call for Applications

12 February 2024 9:29pm

Post-doc position - Field-spanning movement ecology, ecology of fear, bio-logging science, behavioral ecology, and ecological statistics

10 February 2024 7:20am

Underwater camera trap - call for early users

13 December 2023 11:44pm

23 January 2024 1:21pm

Many thanks for your contribution to the survey! We are now summarizing the list of early users and doing our best to offer a newtcam to everyone in due time.

All the best!

Xavier

30 January 2024 10:20pm

Is there still time to apply?

31 January 2024 12:12pm

Hi Danilo, yes just in time ;-)

Computational Entomology Webinar II: Automated Pollinator Monitoring

23 January 2024 12:44pm

1st Joint International Scientific Conference

23 January 2024 8:34am

Testing Raspberry Pi cameras: Results

5 May 2023 5:11pm

5 September 2023 8:16am

And finally for now, the object detectors are wrapped in a Python websocket network wrapper, which makes it easy for the system to use different types of object detectors. It usually takes me about half a day to write a Python wrapper for a new object detector type: you just need to wrap it in the network connection and make it conform to the YOLO way of expressing hits, i.e. the JSON format YOLO outputs, with bounding boxes, class names, and confidence levels.
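A minimal sketch of the "conform to YOLO" step described above, assuming a hypothetical non-YOLO detector that returns `(label, score, x_min, y_min, x_max, y_max)` tuples in pixels. The JSON field names (`name`, `confidence`, `box` with `x1`/`y1`/`x2`/`y2`) are an assumed common schema loosely modelled on YOLO-style output, not the format of the actual system, and the websocket transport is left out so the example runs with the standard library alone.

```python
import json
from typing import Iterable, Tuple

# Hypothetical raw output of a non-YOLO detector:
# (label, score, x_min, y_min, x_max, y_max) in pixel coordinates.
RawDetection = Tuple[str, float, float, float, float, float]


def to_yolo_style_json(raw: Iterable[RawDetection], min_confidence: float = 0.0) -> str:
    """Convert raw detections into a YOLO-like JSON list of hits.

    The field names are an assumed shared schema, not a published spec.
    """
    hits = []
    for label, score, x1, y1, x2, y2 in raw:
        if score < min_confidence:
            continue  # drop low-confidence hits before sending them on
        hits.append({
            "name": label,
            "confidence": round(float(score), 4),
            "box": {"x1": x1, "y1": y1, "x2": x2, "y2": y2},
        })
    return json.dumps(hits)


if __name__ == "__main__":
    raw = [("person", 0.91, 10, 20, 110, 220), ("dog", 0.30, 5, 5, 50, 50)]
    print(to_yolo_style_json(raw, min_confidence=0.5))
```

Keeping every wrapper's output in one shared shape is what lets the downstream logic treat all detectors interchangeably.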

What's more, you can even use multiple object detector models on different parts of a single captured image, and you can cascade the logic, for example to require multiple object detectors to match, or to accept a choice from different object detectors.
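The cascading idea can be sketched as two small combinators over hits in an assumed `name`/`confidence`/`box` schema: an AND rule that keeps a detection only when a second detector reports the same class on an overlapping box (measured by intersection-over-union), and an OR rule that accepts a class from either detector. The function names and the 0.5 IoU threshold are illustrative assumptions; cropping separate regions of one image for different models is not shown.

```python
from typing import Dict, List

Box = Dict[str, float]  # {"x1", "y1", "x2", "y2"} in pixels (assumed schema)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a["x1"], b["x1"]), max(a["y1"], b["y1"])
    ix2, iy2 = min(a["x2"], b["x2"]), min(a["y2"], b["y2"])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a["x2"] - a["x1"]) * (a["y2"] - a["y1"])
    area_b = (b["x2"] - b["x1"]) * (b["y2"] - b["y1"])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def cascade_and(hits_a: List[dict], hits_b: List[dict], label: str,
                min_iou: float = 0.5) -> List[dict]:
    """Keep `label` hits from detector A only if detector B agrees.

    Agreement means B reports the same label on a box overlapping by min_iou.
    """
    confirmed = []
    for ha in hits_a:
        if ha["name"] != label:
            continue
        if any(hb["name"] == label and iou(ha["box"], hb["box"]) >= min_iou
               for hb in hits_b):
            confirmed.append(ha)
    return confirmed


def cascade_or(hits_a: List[dict], hits_b: List[dict], label: str) -> List[dict]:
    """Accept `label` hits reported by either detector."""
    return [h for h in hits_a + hits_b if h["name"] == label]
```

An AND cascade trades recall for fewer false alarms, while an OR cascade does the opposite; which one fits depends on how costly a false alert is.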

It's the perfect anti-poaching system (If I say so myself :) )

10 January 2024 11:47pm

Hey @kimhendrikse, thanks for all these details. I just caught up. I like your approach of supporting multiple object detectors and using the Python websockets wrapper! Is your code available somewhere?

11 January 2024 6:02am

Yep, here:

Currently it only installs on older Jetsons; in the coming weeks I’ll finish the install code for current Jetsons.

Technically speaking, if you were an IT specialist you could even make it work in WSL2 Ubuntu on Windows, but I haven’t published instructions for that. If you were even more of a specialist you wouldn’t need WSL2 either. One day I’ll publish instructions for that once I’ve done it. It would be slow, though, unless the Windows machine had an NVIDIA GPU and you got PyTorch working with it.

Update 3: Cheap Automated Mothbox

31 December 2023 9:37pm

1 January 2024 9:18pm

This looks amazing! I'm currently working with hastatus bats up in Bocas; it would be really interesting to use some of these near foraging sites. Be sure to post again when you publish the final documentation on GitHub!

Also, Gamboa......dang I miss that little slice of heaven...

Super cool work Andrew!

Best,

Travis

5 January 2024 8:56pm

Thanks!!!

10 January 2024 11:33pm

Great work! I very much look forward to trying out the MothBeam light. That's going to be a huge help in making moth monitoring more accessible.

And well done digging into the picamera2 library to reduce the amount of time the light needs to be on while taking a photo. That is a super annoying issue!

Project support officer - Conservation Tech

11 December 2023 10:24pm

Project introductions and updates

2 August 2022 11:21am

29 March 2023 6:05pm

Hi all! I'm part of a Pollinator Monitoring Program at California State University, San Marcos, which was started by a fellow lecturer who was interested in learning more about the efficacy of pollinator gardens. It grew to include comparisons with the local natural habitat, Coastal Sage Scrub, and I was initially brought on board to assist with data analysis, data management, etc. We then pivoted to using camera traps and AI for insect detection in place of in-person monitoring (to increase the amount of data and add a cool tech angle to the effort, given that it is of interest to local community partners that have pollinator gardens).

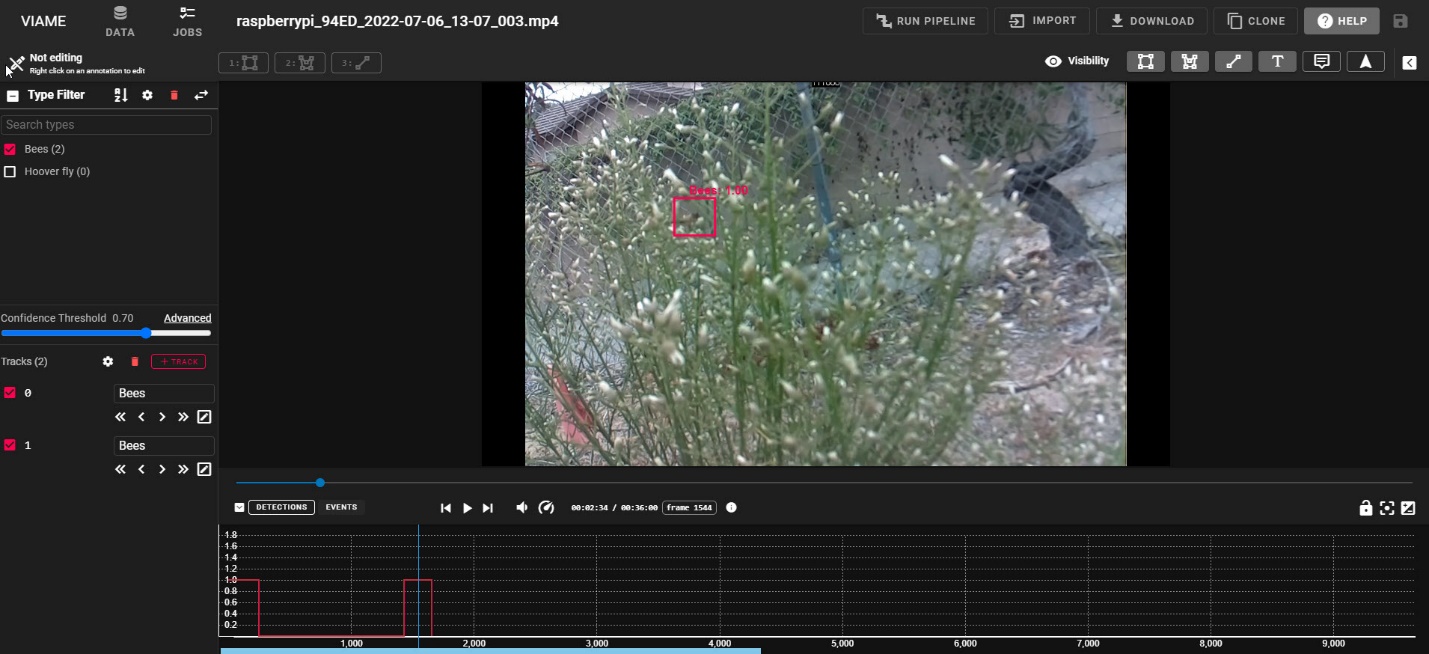

The group heavily involves students as researchers, and they are instrumental to the projects. We have settled on a combination of video footage and development of deep neural networks using the cloud-hosted video track detection tool VIAME (originally developed by Kitware for NOAA Fisheries for fish track detection). Students built our first two PICTs (low-cost camera traps) and annotated the data from our pilot study, which we are now starting to use for network development. Here's a cool pic of the easy-to-use interface that students use when annotating data:

Figure 1: VIAME software demonstrating annotation of the track of an insect in the video (red box). Annotations are done manually to develop a neural network for the automated processing.

The goal of the group's camera trap team is to develop a neural network that can track insect pollinators associated with a wide variety of plants, and to use it to collect large datasets to better understand pollinator occurrence and activity within local habitats. This ultimately relates to native habitat health and can be used for long-term tracking of changes in the ecosystem, with the idea that knowledge of pollinators may inform resource and conservation managers, as well as local organizations, in their land-use practices. We are ultimately interested in working further with the Kitware folks, not only to develop a robust network (and share it broadly, of course!), but also to customize the data extraction from automated tracks to include automated species/species-group identification and information on the interaction rates of those pollinators. We would love any suggestions for appropriate calls for proposals to apply to, as well as any information or suggestions regarding the PICT camera or our methods. We are also looking to include night-time data collection at some point and are aware that near-infrared is advised, but would appreciate any thoughts or advice on that avenue as well.
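For the interaction-rate information mentioned above, one simple starting point is to count tracks per taxon and divide by observation time. The sketch below assumes a hypothetical track record with a taxon label and start/end times (this is not VIAME's export format) and treats each track as one visit; in practice the track-to-visit mapping may need more care, for example when an insect leaves and re-enters the frame.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Track:
    """One automated insect track (hypothetical schema)."""
    taxon: str      # species or species-group label
    start_s: float  # track start, seconds from the start of recording
    end_s: float    # track end, seconds from the start of recording


def visit_rates(tracks: List[Track], observation_hours: float) -> Dict[str, float]:
    """Visits per hour for each taxon, treating each track as one visit."""
    if observation_hours <= 0:
        raise ValueError("observation_hours must be positive")
    counts: Dict[str, int] = defaultdict(int)
    for t in tracks:
        counts[t.taxon] += 1
    return {taxon: n / observation_hours for taxon, n in counts.items()}


def mean_visit_duration(tracks: List[Track]) -> Dict[str, float]:
    """Mean track duration in seconds for each taxon."""
    durations: Dict[str, List[float]] = defaultdict(list)
    for t in tracks:
        durations[t.taxon].append(t.end_s - t.start_s)
    return {taxon: sum(d) / len(d) for taxon, d in durations.items()}


tracks = [Track("bee", 0, 5), Track("bee", 100, 110), Track("hoverfly", 200, 203)]
print(visit_rates(tracks, observation_hours=2.0))  # {'bee': 1.0, 'hoverfly': 0.5}
print(mean_visit_duration(tracks))                 # {'bee': 7.5, 'hoverfly': 3.0}
```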

We will of course post when we have more results and look forward to hearing more about all the interesting projects happening in this space!

Cheers,

Liz Ferguson

5 April 2023 10:00pm

Hi, as Tom mentioned, I am working here in Vermont on moth monitoring using machines with Tom and others. We have a network going from here into Canada with others. Would love to catch up with you soon. I am away until late April, but would love to connect after that!

10 December 2023 5:10pm

The preprint to our camera trap paper is now available at bioRxiv.

Two-year postdoc in AI and remote sensing for citizen-science pollinator monitoring

4 December 2023 12:21pm

AWMS Conference 2023

Update 2: Cheap Automated Mothbox

23 October 2023 8:32pm

24 October 2023 9:05am

Hi Andrew,

thanks for sharing your development process so openly, that's really cool and boosts creative thinking also for the readers! :)

Regarding a solution for Raspberry Pi power management: we are using the PiJuice Zero pHAT in combination with a Raspberry Pi Zero 2 W in our insect camera trap. There are also other versions available, e.g. for the RPi 4 (more info: PiJuice GitHub). In my experience the PiJuice mostly works great and is super easy to install and set up. The downsides are the price and the lack of further software updates/development. It would be really interesting if you could compare one of their HATs to the products from Waveshare. Another possible solution would be a product from UUGear. I have the Witty Pi 4 L3V7 lying around, but haven't been able to test and compare it to the PiJuice HAT yet.

Is there a reason why you are using the Raspberry Pi 4? From what I understand about your use case, the RPi Zero 2 W or even RPi Zero should give enough computing power and require a lot less power. Also they are smaller and would be easier to integrate in your box (and generate less heat).

I'm excited for the next updates to see in which direction you will be moving forward with your Mothbox!

Best,

Max

25 October 2023 6:21pm

Thanks for these tips!

We are using an RPi 4 because the people I am building it for want images from Arducam's 64MP camera, and we need the RPi 4 to make that work.

27 October 2023 6:44am

Thanks a lot for this detailed update on your project! It looks great!

Entomological Research Specialist for Automated Insect Monitoring

25 October 2023 7:21pm

Cheap Automated Mothbox

31 August 2023 10:19pm

25 October 2023 5:04pm

I'm looking into writing a sketch for the esp32-cam that can detect pixel changes and take a photo, wish me luck.
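The core of a pixel-change trigger is frame differencing: compare each new frame with the previous one and fire when enough pixels have changed by more than some amount. Here is a host-side Python illustration of that idea (not ESP32 firmware), assuming frames arrive as flat lists of 8-bit grayscale values of equal length; both thresholds are hypothetical and would need tuning in the field.

```python
from typing import List, Sequence


def changed_fraction(prev: Sequence[int], curr: Sequence[int],
                     pixel_threshold: int = 25) -> float:
    """Fraction of pixels whose grayscale value changed by more than pixel_threshold."""
    if len(prev) != len(curr):
        raise ValueError("frames must have the same number of pixels")
    if not curr:
        return 0.0
    changed = sum(1 for p, c in zip(prev, curr) if abs(p - c) > pixel_threshold)
    return changed / len(curr)


def motion_detected(prev: Sequence[int], curr: Sequence[int],
                    pixel_threshold: int = 25, area_threshold: float = 0.01) -> bool:
    """True when at least area_threshold of the frame changed noticeably."""
    return changed_fraction(prev, curr, pixel_threshold) >= area_threshold


def frames_to_capture(frames: List[Sequence[int]], **kwargs) -> List[int]:
    """Indices of frames that differ enough from the frame before them."""
    return [i for i in range(1, len(frames))
            if motion_detected(frames[i - 1], frames[i], **kwargs)]


still = [100] * 100             # uniform 10x10 test frame
moved = [100] * 95 + [200] * 5  # 5% of pixels brightened by a passing insect
print(frames_to_capture([still, still, moved, moved]))  # [2]
```

Comparing against the previous frame means an insect that lands and sits still stops triggering after its arrival; comparing against a slowly updated background frame is a common alternative.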

25 October 2023 5:11pm

One question, does it even need motion detection? What about taking a photo every 5 seconds and sorting the photos afterwards?

25 October 2023 6:23pm

It depends on which scientists you talk to. I am in favor of just doing a timelapse and sorting in post-processing afterwards. I don't see much reason for such motion fidelity. For the box I am making we are doing exactly that, though maybe with a photo every minute or so.
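To put the interval choice in perspective, the number of frames per night scales inversely with the interval. A quick sketch, assuming a 12-hour night (an illustrative figure, not one from the thread):

```python
def photos_per_night(night_hours: float, interval_s: float) -> int:
    """Frames captured over one night, counting one frame per full interval."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return int(night_hours * 3600 // interval_s)


# Assumed 12-hour night; intervals taken from the discussion above.
for interval in (5, 60):
    print(f"every {interval:>2} s -> {photos_per_night(12, interval)} photos per night")
```

Under that assumption, a 5-second interval produces 8640 images per night versus 720 at one per minute, so there are 12 times as many images to store and sort afterwards.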

Metadata standards for Automated Insect Camera Traps

24 November 2022 9:49am

2 December 2022 3:58pm

Yes. I think this is really the way to go!

6 July 2023 4:48am

Here is another metadata initiative to be aware of. OGC has been developing a standard for describing training datasets for AI/ML image recognition and labeling. The review phase is over and it will become a new standard in the next few weeks. We should consider its adoption when we develop our own training image collections.

24 October 2023 9:12am

For anyone interested: the GBIF guide Best Practices for Managing and Publishing Camera Trap Data is still open for review and feedback until next week. More info can be found in their news post.

Best,

Max

Automated moth monitoring & you!

24 October 2023 8:52am

Catch up with The Variety Hour: October 2023

19 October 2023 11:59am

2 May 2024 2:02pm

Hey @JakeBurton , nice work with The Inventory!

This is very aligned with some internal efforts going on in our community to map out devices, and eventually also data and models. It would be great if we could find a way to make these two interoperable. We are still in the (very) alpha development phase, so it is quite open, but it would be super helpful to have some insight into the under-the-hood data structure that The Inventory relies on, so we can figure out good ways to reliably map our info onto it.

Happy to chat further or set up a meeting!

@qgeissmann👀