Camera traps have been a key part of the conservation toolkit for decades. Remotely triggered video or still cameras allow researchers and managers to monitor cryptic species, survey populations, and support enforcement responses by documenting illegal activities. Increasingly, machine learning is being implemented to automate the processing of data generated by camera traps.

A recently published study showed that, despite being well-established and widely used tools in conservation, camera traps have seen little development progress since the modern model emerged in the mid-2000s, leaving users struggling with many of the same issues they faced a decade ago. That manufacturer ratings have not improved over time, despite technological advancements, demonstrates the need for a new generation of innovative conservation camera traps. Join this group to explore existing efforts, established needs, and what next-generation camera traps might look like, including the integration of AI for data processing through initiatives like Wildlife Insights and Wild Me.

Group Highlights:

Our past Tech Tutors seasons included multiple episodes for experienced and new camera trappers. How Do I Repair My Camera Traps? featured WILDLABS members Laure Joanny, Alistair Stewart, and Rob Appleby and covered many troubleshooting and DIY resources for common issues.

For camera trap users looking to incorporate machine learning into the data analysis process, Sara Beery's How do I get started using machine learning for my camera traps? is an incredible resource discussing the user-friendly tool MegaDetector.

And for those who are new to camera trapping, Marcella Kelly's How do I choose the right camera trap(s) based on interests, goals, and species? will help you make important decisions based on factors like species, environment, power, durability, and more.

Finally, for an in-depth conversation on camera trap hardware and software, check out the Camera Traps Virtual Meetup featuring Sara Beery, Roland Kays, and Sam Seccombe.

And while you're here, be sure to stop by the camera trap community's collaborative troubleshooting data bank, where we're compiling common problems with the goal of creating a consistent place to exchange tips and tricks!

Header photo: ACEAA-Conservacion Amazonica

- @bluevalhalla

- | he/him

BearID Project & Arm

Developing AI and IoT for wildlife

- 0 Resources

- 27 Discussions

- 6 Groups

- @Frank_van_der_Most

- | He, him

FileMaker developer with a passion for nature and an interest in funding and finance

- 11 Resources

- 83 Discussions

- 7 Groups

British Antarctic Survey (BAS)

Machine learning researcher at the British Antarctic Survey

- 0 Resources

- 0 Discussions

- 5 Groups

- @HollyCormack

- | she/her

Biodiversity Knowledge Management Intern at the Biodiversity Consultancy Ltd

- 6 Resources

- 5 Discussions

- 6 Groups

- @capreolus

- | he/him

Capreolus e.U.

wildlife biologist with capreolus.at

- 1 Resources

- 71 Discussions

- 16 Groups

- @affiliatepurna

- | general email id

Purna Man Shrestha is a program coordinator at Mountain Spirit, Nepal. He has 6 years of working experience in human-wildlife interaction, focusing on wildcats and conflicts. He has good knowledge of wildlife survey techniques, field surveys, and stakeholder engagement.

- 0 Resources

- 0 Discussions

- 11 Groups

- @csugarte

- | Miss

I am a PhD student working on human-carnivore conflict and coexistence

- 0 Resources

- 1 Discussions

- 3 Groups

- @Agripina

- | Miss

Frankfurt Zoological Society

As a wildlife conservationist, I am deeply committed to nature conservation, community empowerment, and wildlife research in Tanzania. I've actively engaged in community-based projects, passionately advocating for integrating local communities into conservation.

- 0 Resources

- 4 Discussions

- 6 Groups

- 0 Resources

- 1 Discussions

- 9 Groups

DIY electronics for behavioral field biology

- 1 Resources

- 56 Discussions

- 4 Groups

WILDLABS & Fauna & Flora

I'm the Platform and Community Support Project Officer at WILDLABS! Speak to me if you have any inquiries about using the WILDLABS Platform or AI for Conservation: Office Hours.

- 18 Resources

- 26 Discussions

- 6 Groups

Wildlife Conservationist and Socio-ecology Researcher.

Passionate about preserving biodiversity in their natural homes, and studying the interactions between social systems and ecological environment

- 0 Resources

- 0 Discussions

- 4 Groups

National Geographic is offering funding up to $50,000 for conservationists conducting research on how the pandemic has impacted wildlife and conservation work. If you are interested in researching aspects of the...

10 March 2021

Article

WildID is excited to share their new camera trap processing and detection tools with WILDLABS! Using machine learning to identify Southern African wildlife species in large quantities of camera trap data, WildID's tool...

8 March 2021

Based on one of the largest camera trap surveys ever attempted, Internet of Elephants' new mobile game Unseen Empire draws on the real field experiences and camera trap data sets of a single decade-long survey, giving...

8 March 2021

Article

Today we're celebrating the #Tech4Wildlife Photo Challenge by shining a spotlight on one of our favorite WILDLABS collaboration success stories: the BoomBox! This collaboration between Dr. Meredith Palmer, Jacinta ...

26 February 2021

Last year, Tim van Deursen and Thijs Suijten shared their new "Hack the Poacher" system with us, presenting a unique way to detect poachers in real-time within protected national parks. Read on to learn about their next...

29 January 2021

In this case study from WWF's Northern Great Plains Program, Black-footed Ferret Restoration Manager Kristy Bly discusses how infrared FLIR cameras help teams detect and monitor the highly endangered black-footed...

19 January 2021

WILDLABS member Meredith Palmer shared this great course module based around Snapshot Serengeti camera trap data, and developed for university biology courses. This material is ideal for introductory level biology...

25 November 2020

To celebrate the first Black Mammalogists Week (starting Sunday, September 13th), we talked to four of the amazing Black scientists behind this event! Find out what they had to say about their favorite (and most...

10 September 2020

Today, WWF conservation engineering intern Ashley Rosen shares insight into the process of redesigning a camera mount for FLIR thermal cameras used by rangers in the fight against poaching. Ashley's design will become a...

24 August 2020

Our first season of Tech Tutors may have wrapped, but the connections and collaborations from these episodes are still going strong! Today, we're sharing Tech Tutor presenter Laure Joanny's recap of the most important...

20 August 2020

Since 2016, ZSL’s Instant Detect team have been working on improving metal detecting sensors for anti-poaching. The team believe that using metal detecting sensors will provide a highly targeted detection of potential...

10 August 2020

In this case study from herpetologist Emily Taylor, we learn about the best methods and gear used to track snakes, lizards, and other reptiles and amphibians via radio-telemetry, and how these techniques have changed...

31 July 2020


38 Products

Recently updated products

| Description | Activity | Replies | Groups | Updated |
|---|---|---|---|---|
| Hi there!, You should definitely check out VIAME, which includes a video annotation tool in addition to deep learning neural network training and deployment. It has a user... | +3 | | Camera Traps | 5 months 3 weeks ago |
| Featuring some of the very best spider video you'll ever see.. @JayStafstrom has been pushing the boundaries of camera technology,... | | | Camera Traps, Sensors | 5 months 3 weeks ago |
| Also, take a look at TrapTagger. It has integration with WildMe. | +4 | | AI for Conservation, Camera Traps | 5 months 3 weeks ago |
| camtrapR has a function that does what you want. i have not used it myself but it seems straightforward to use and it can run across directories of images: https://jniedballa.... | +2 | | Camera Traps, Data management and processing tools, Open Source Solutions, Software and Mobile Apps | 6 months ago |
| Thank you for the links, Robin. | +7 | | Camera Traps | 6 months 2 weeks ago |
| The two cameras you mention below tick off most of the items in your requirements list. I think the exception is the “timed start” whereby the camera would “wake up” to arm... | | | Camera Traps | 6 months 2 weeks ago |
| Hi Ben, I would be interested to see if the Instant Detect 2.0 camera system might be useful for this. The cameras can transmit thumbnails of the captured images using LoRa radio to... | +4 | | Camera Traps | 6 months 2 weeks ago |
| Hello Sam, What would you say would be the estimate cost was for the first version Instant Detect 1.0 ? That might help my research ? | | | AI for Conservation, Camera Traps, Human-Wildlife Conflict, Sensors | 6 months 2 weeks ago |
| Hi @GermanFore , I work with the BearID Project on individual identification of brown bears from faces. More recently we worked on face detection across all bear species and ran... | | | AI for Conservation, Camera Traps, Data management and processing tools, Software and Mobile Apps | 7 months ago |
| Hi Jay! Thanks for posting this here as well as your great presentation in the Variety Hour the other day! Cheers! | | | Camera Traps | 7 months 1 week ago |
| For anyone interested: the GBIF guide Best Practices for Managing and Publishing Camera Trap Data is still open for review and feedback until next week. More info can be found in... | | | Autonomous Camera Traps for Insects, Camera Traps | 7 months 2 weeks ago |
| Hi Maddie, This camera has a very quick reaction time. | | | Camera Traps | 7 months 2 weeks ago |

Integrating AI models with camera trap management applications

25 March 2024 7:47pm

7 June 2024 12:58pm

@bluevalhalla The latest EcoAssist version now includes the frame_number in the video detections.

    {
      "images": [
        {
          "file": "animal_2.mp4",
          "detections": [
            {
              "category": "1",
              "conf": 0.975,
              "bbox": [
                0.2609,
                0.4944,
                0.5531,
                0.4194
              ],
              "frame_number": 168 # <- NEW
            }
          ]
        }
      ],

8 June 2024 2:08am

@pvlun Thank you for the pointer to keep the frame level detections. I'll give it a try next week.

@dmorris Thanks for the description of MD's video processing. I didn't even realize MD had video processing. I was extracting frames with ffmpeg and running those through MD as images. I don't typically run every frame. I'm usually in the 1-5 fps range. I should have said I was looking for the frame-level details for all the extracted frames (as opposed to all frames).
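For readers who want to work with frame-level video detections like the snippet shared in this thread, here is a minimal sketch of grouping detections by file and frame. It assumes the results file follows the structure shown in the thread (`images`, `file`, `detections`, `conf`, `frame_number`); the function name `detections_by_frame` and the `min_conf` threshold are hypothetical and are not part of EcoAssist or MegaDetector.

```python
import json
from collections import defaultdict


def detections_by_frame(results, min_conf=0.5):
    """Group detections by video file and frame number.

    `results` is a parsed results dict shaped like the snippet in the thread.
    `min_conf` is an illustrative threshold, not a recommended value.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for entry in results.get("images", []):
        for det in entry.get("detections", []):
            if det["conf"] < min_conf:
                continue
            # Older result files may lack "frame_number"; those land under None.
            frame = det.get("frame_number")
            grouped[entry["file"]][frame].append(det)
    # Convert nested defaultdicts to plain dicts for easier inspection.
    return {name: dict(frames) for name, frames in grouped.items()}


# Sample data copied from the thread (the "# <- NEW" annotation is removed
# because comments are not valid JSON).
sample = json.loads("""
{
  "images": [
    {
      "file": "animal_2.mp4",
      "detections": [
        {
          "category": "1",
          "conf": 0.975,
          "bbox": [0.2609, 0.4944, 0.5531, 0.4194],
          "frame_number": 168
        }
      ]
    }
  ]
}
""")

frames = detections_by_frame(sample)
print(sorted(frames["animal_2.mp4"].keys()))
```

Grouping by frame rather than by video keeps every detection on each processed frame, which is what the frame-level use case described above needs.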

€4,000 travel grants for insect monitoring and AI

6 June 2024 4:49pm

looking for help

5 June 2024 9:38am

5 June 2024 4:19pm

Hi Agripina!

I have always used Browning, and I have found the basic models to be perfectly adequate, using only 6 AA batteries (not 8 like the majority of camera traps). Now I am using the model BTC-8E, which has been a great success due to its excellent definition.

I am from Chile, and I know of a company that imports them. Perhaps you should look for someone in your country that does the same, because importation and customs duties are often a headache.

I recommend visiting trailcampro.com to read customer reviews of different brands and models of camera traps.

I hope this information is helpful.

5 June 2024 8:32pm

Hi Carolina

Thank you so much for the useful information.

5 June 2024 8:34pm

Hi Alex

Thank you so much for the input 🙏

Technical and Client Management Specialist - Wildlife Insights

5 June 2024 7:58pm

New WildLabs Funding & Finance group

5 June 2024 3:24pm

5 June 2024 4:14pm

6 June 2024 1:38am

6 June 2024 4:16am

Time drift in old Bushnell

24 May 2024 7:41am

31 May 2024 12:21pm

Depending on how much drift there is, it may be a fixed offset caused by the timer not restarting until you have finished putting in all the settings. You set the time, then spend a couple of minutes on the other settings, then exit settings, and the timer starts from the time you set; in other words, it runs about two minutes slow. The apparent drift will be short and fairly consistent, and will not increase with time (it is a bias). The solution is to leave the time setting until last and exit setup immediately after you enter the time.

If it is genuine drift then you can correct for it to an extent by noting the time on the camera and on an accurate timepiece when you retrieve the images. If you want to get fancy you can take an image of a GPS screen with the time on it, and compare it to the time stamp on the image.
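The correction described above can be sketched in a few lines. This assumes the drift accumulates linearly between the moment the clock was set and the moment of retrieval, which is an assumption and may not hold for every camera; the function name `correct_timestamp` and its parameters are hypothetical.

```python
from datetime import datetime, timedelta


def correct_timestamp(stamp, set_time, camera_at_retrieval, true_at_retrieval):
    """Correct an image timestamp assuming linear clock drift.

    stamp               -- timestamp recorded on the image (camera clock)
    set_time            -- when the camera clock was set (assumed correct then)
    camera_at_retrieval -- camera clock reading at retrieval
    true_at_retrieval   -- accurate time (e.g. GPS) at retrieval
    """
    span = camera_at_retrieval - set_time
    if span <= timedelta(0):
        raise ValueError("retrieval must be later than the time the clock was set")
    total_error = true_at_retrieval - camera_at_retrieval
    # Fraction of the deployment elapsed when the image was taken.
    fraction = (stamp - set_time) / span
    return stamp + total_error * fraction


# Example: the camera is 10 minutes slow after a 10-day deployment.
set_time = datetime(2024, 1, 1, 0, 0)
camera_end = datetime(2024, 1, 11, 0, 0)
true_end = datetime(2024, 1, 11, 0, 10)
corrected = correct_timestamp(datetime(2024, 1, 6, 0, 0), set_time, camera_end, true_end)
print(corrected)
```

For the fixed-offset case described in the previous paragraph, the same offset would simply be added to every timestamp instead of being scaled by elapsed time.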

Share Your Work in a Conservation Technology Video

17 May 2024 9:06pm

4th International Workshop on Camera Traps, AI, and Ecology

9 May 2024 1:00pm

Using drones and camtraps to find sloths in the canopy

18 July 2023 7:39pm

3 May 2024 6:48pm

Thank you for the tip, Eve! In fact, in the area where the foundation works, there clearly are dry seasons, the past few years much drier than normal, where trees lose their leaves a lot.

6 May 2024 4:29pm

Yes, if the canopy is sparse enough, you can see through the canopy with TIR what you cannot see in the RGB. We tested with large mammals like rhinos and elephants that we could not see at all with the RGB under a semi-sparse canopy but that were very clearly visible in TIR. It was actually quite surprising how easily we could detect the mammals under the canopy. It's likely similar for mid-sized mammals that live in the canopy: they should be much easier to detect in those drier seasons, although we did not test small mammals for visibility through the seasons. Other researchers have, and there are a number of studies on primates now.

I did quite a bit of flying above the canopy, and did not have many problems. It's just a matter of always flying a bit higher than the canopy. There are built-in crash avoidance mechanisms in the drones themselves for safety so they do not crash, although they do get confused by a very branchy understory. They often miss smaller branches. If you look in the specifications of the particular UAV you will see they do not perform well with certain understories, so there is a chance of crashing. The same goes for telephone wires or other infrastructure that you have to be careful about.

Also, it's good practice to always be able to see the drone, line-of-sight, which is actually a requirement for flight operations in many countries. Although you may be able to get around it by being in a tower or being in an open area.

Some studies have used AI classifiers and interesting frameworks to discuss full or partial detections; sometimes it is unknown whether a detection is the animal of interest. I would carefully plan any fieldwork around the seasons and make sure to get any of your paperwork approved well before the months of the dry season. It's going to be your best chance to detect them.

7 May 2024 1:49am

Thank you for elaborating, @evebohnett ! And for the heads ups!

Travel grants for insect monitoring and AI

3 May 2024 5:20pm

The Inventory User Guide

1 May 2024 12:46pm

Introducing The Inventory!

1 May 2024 12:46pm

2 May 2024 3:08pm

3 May 2024 5:33pm

17 May 2024 7:29am

Hiring Chief Engineer at Conservation X Labs

1 May 2024 12:19pm

MegaDetector v5 release

20 June 2022 9:06pm

23 April 2024 9:43pm

Hi @dmorris,

might you have encountered this issue while working with Mega detector v5?

The conflict is caused by:
    pytorchwildlife 1.0.2.13 depends on torch==1.10.1
    pytorchwildlife 1.0.2.12 depends on torch==1.10.1
    pytorchwildlife 1.0.2.11 depends on torch==1.10.1

if yes what solution helped?

23 April 2024 10:38pm

I'm sorry, I don't use PyTorch-Wildlife; I recommend filing an issue on their repo. Good luck!

23 April 2024 10:38pm

[oops, the same reply got submitted twice and there doesn't seem to be a "delete" button]

Pytorch-Wildlife: A Collaborative Deep Learning Framework for Conservation (v1.0)

21 February 2024 10:30pm

26 February 2024 11:58pm

This is great, thank you so much @zhongqimiao ! I will check it out and look forward to the upcoming tutorial!

17 April 2024 11:07am

Hi everyone! @zhongqimiao was kind enough to join Variety Hour last month to talk more about Pytorch-Wildlife, so the recording might be of interest to folks in this thread. Catch up here:

23 April 2024 9:48pm

Hi @zhongqimiao ,

Might you have faced such an issue while using mega detector

The conflict is caused by:
    pytorchwildlife 1.0.2.13 depends on torch==1.10.1
    pytorchwildlife 1.0.2.12 depends on torch==1.10.1
    pytorchwildlife 1.0.2.11 depends on torch==1.10.1

if yes how did you solve it, or might you have any ideas?

torch 1.10.1 doesn't seem to exist

Ecologist Postdoctoral Research Fellow

23 April 2024 4:32pm

Program Manager: Integrating movement and camera trap data with international conservation policy

22 April 2024 10:16pm

Postdoc: Biologging & Camera Trap Data Integration

22 April 2024 10:10pm

Newt belly pattern for picture-matching

18 April 2024 3:57pm

19 April 2024 10:31am

19 April 2024 4:14pm

Hi Robin, this is a great idea! I have been thinking about approaching the community for quiz questions. I will reach out to Xavier to ask if we can use it or if he wishes to run it on next Wednesday's VH.

22 April 2024 11:27am

Thanks, and that's a match!

All these pictures are from a lab experiment and formatted with AmphIdent. We took weekly belly pictures of several larvae. The aim of this Google form is to validate (by comparing answers with the lab reference image base) that picture-matching by humans is reliable, even though the pattern can change very quickly at this life stage.

If validated there will be the need to improve existing pattern-matching solutions for in situ observations of larvae, for instance with ML and/or Human Learning (thanks Zooniverse)...

WILDLABS AWARDS 2024 - No-code custom AI for camera trap species classification

5 April 2024 7:00pm

10 April 2024 3:55am

Happy to explain for sure. By Timelapse I mean images taken every 15 minutes, and sometimes the same seals (anywhere from 1 to 70 individuals) were in the image for many consecutive images.

17 April 2024 5:53pm

Got it. We should definitely be able to handle those images. That said, if you're just looking for counts, then I'd recommend running MegaDetector, which is an object detection model that outputs a bounding box around each animal.
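As a rough illustration of turning bounding boxes into counts, the sketch below tallies animal detections per image from a parsed detection results dict. It assumes the `images` / `detections` / `category` / `conf` structure seen elsewhere on this page and that category `"1"` denotes animals; the function name `count_animals`, the file names, and the `min_conf` threshold are hypothetical, and a sensible threshold depends on your data.

```python
def count_animals(results, min_conf=0.2, animal_category="1"):
    """Count detections per image that look like animals.

    `min_conf` and `animal_category` are illustrative assumptions; check the
    category mapping in your own results file before relying on them.
    """
    counts = {}
    for entry in results.get("images", []):
        detections = entry.get("detections") or []
        counts[entry["file"]] = sum(
            1
            for det in detections
            if det["category"] == animal_category and det["conf"] >= min_conf
        )
    return counts


# Made-up example data for two timelapse images.
example = {
    "images": [
        {
            "file": "seals_0001.jpg",
            "detections": [
                {"category": "1", "conf": 0.9, "bbox": [0.1, 0.1, 0.2, 0.2]},
                {"category": "1", "conf": 0.6, "bbox": [0.4, 0.1, 0.2, 0.2]},
                {"category": "1", "conf": 0.1, "bbox": [0.7, 0.1, 0.2, 0.2]},
            ],
        },
        {
            "file": "seals_0002.jpg",
            "detections": [
                {"category": "2", "conf": 0.8, "bbox": [0.3, 0.3, 0.1, 0.4]},
            ],
        },
    ]
}

counts = count_animals(example)
print(counts)
```

Counts from a detector are only as good as the chosen threshold, so spot-checking a sample of images against manual counts is a reasonable first step.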

21 April 2024 5:19pm

Hi, this is pretty interesting to me. I plan to fly a drone over wild areas and look for invasive species incursions. So feral hogs are especially bad, but in the Everglades there is a big invasion of huge snakes. In various areas there are big herds of wild horses that will eat themselves out of habitat also, just to name a few examples. Actually the data would probably be useful in looking for invasive weeds, that is not my focus but the government of Canada is thinking about it.

Does your research focus on photos, or can you analyze LIDAR? I don't really know what emitters are available to fly over an area, or which beam type would be best for each animal type. I know that some drones carry a LIDAR besides a camera for example. Maybe a thermal camera would be best to fly at night.

Faces, Flukes, Fins and Flanks: How multispecies re-ID models are transforming Wild Me's work

17 April 2024 11:10am

Camera Trap storage and analyzing tools

16 April 2024 5:15pm

16 April 2024 7:17pm

I don't have an easy solution or a specific recommendation, but I try to track all the systems that do at least one of those things here:

https://agentmorris.github.io/camera-trap-ml-survey/#camera-trap-systems-using-ml

That's a list of camera trap analysis / data management systems that use AI in some way, but in practice, just about every system available now uses AI in some way, so it's a de facto list of tools you might want to at least browse.

AFAIK there are very few tools that are all of (1) a data management system, (2) an image review platform, and (3) an offline tool. If "no Internet access" still allows for access to a local network (e.g. WiFi within the ranger station), Camelot is a good starting point; it's designed to have an image database running on a local network. TRAPPER has a lot of the same properties, and @ptynecki (who works on TRAPPER) is active here on WILDLABS.

Ease of use is in the eye of the beholder, but I think what you'll find is that any system that has to actually deal with shared storage will require IT expertise to configure, but systems like Camelot and TRAPPER should be very easy to use from the perspective of the typical users who are storing and reviewing images every day.

Let us know what you decide!

17 April 2024 1:55am

Can't beat Dan's list!

I would just add that if you're interested in broader protected area management, platforms like EarthRanger and SMART are amazing, and can integrate with camera-trapping (amongst other) platforms.

Starting a Conservation Technology Career with Vainess Laizer

Esther Githinji


16 April 2024 1:34pm

Using the multivariate Hawkes process to study interactions between multiple species from camera trap data

15 April 2024 3:00pm

"We present the multivariate Hawkes process (MHP) and show how it can be used to analyze interactions between several species using camera trap data."

Research Assistant, Lion Landscapes in Nanyuki

11 April 2024 9:49am

Comparing local ecological knowledge with camera trap data to study mammal occurrence in anthropogenic landscapes of the Garden Route Biosphere Reserve

9 April 2024 7:09pm

Combining camera traps and online surveys provided a more comprehensive understanding of mammal communities in anthropogenic landscapes, increasing both spatial coverage and the number of species sightings.

Online training workshop: camera trap distance sampling, 27-31 May 2024.

6 April 2024 4:00am

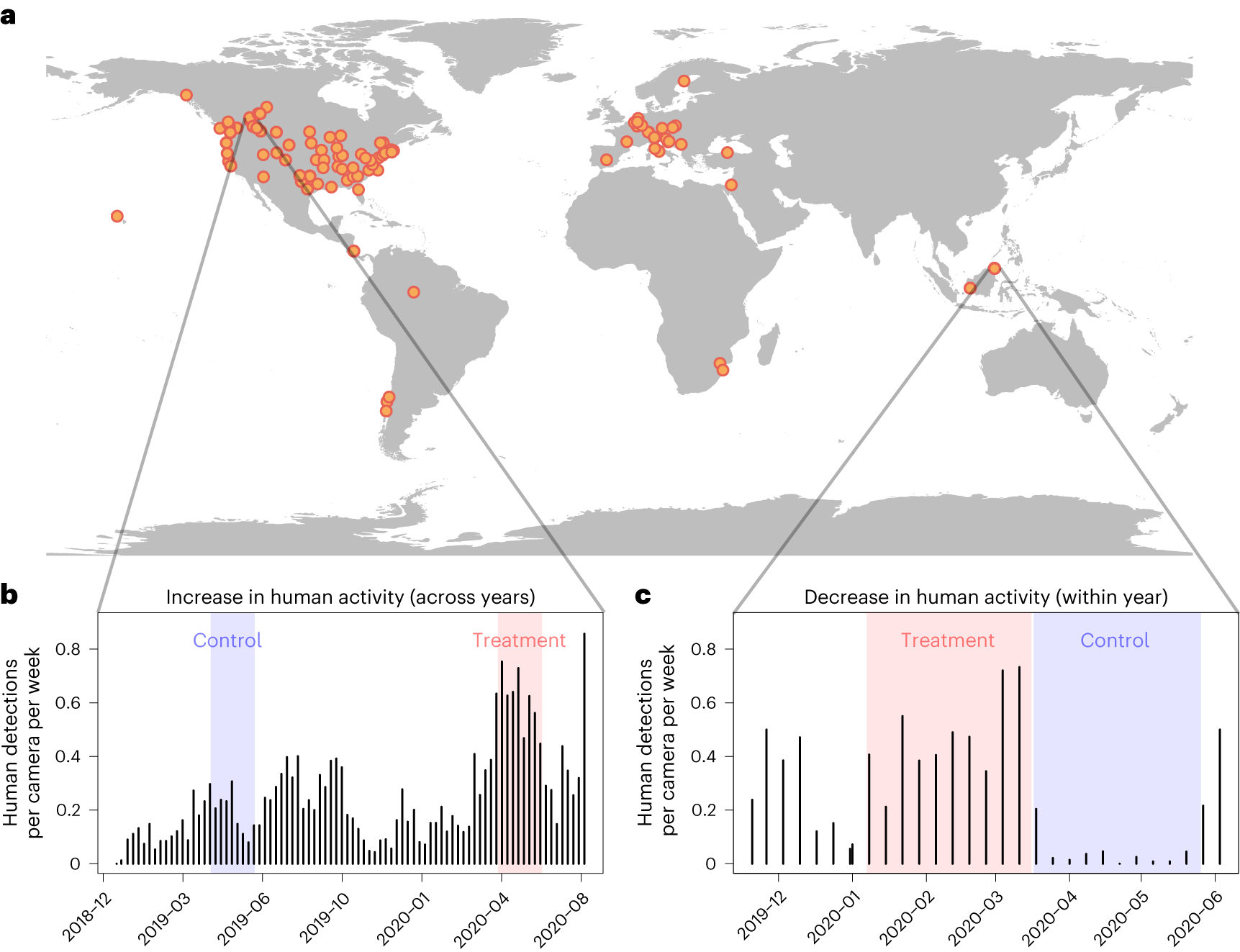

Mammal responses to global changes in human activity vary by trophic group and landscape

3 April 2024 4:52pm

These researchers used camera trapping as a lens to view mammal responses to changes in human activity during the COVID-19 pandemic.

EcoAssist - Free AI models for camera traps photos identification

3 April 2024 7:16am

Blind Spots in Conservation Tech Management in Remote Landscapes: Seeking Your Input

20 March 2024 10:51am

22 March 2024 9:48am

Hi @lucianofoglia

Thanks for sharing your thoughts with the community. What you've touched on resonates with a number of users and developers (looking at you @Rob_Appleby) who share similar concerns and are keen to address these issues.

As a believer in open sourcing conservation technologies to mitigate the issues you've noted (maintenance of technologies / solutions, repairability, technical assistance, to name but a few), really the only way to achieve this in my eyes is through the promotion of openness, enabling a wide range of both technical and non-technical users to form the pool of skills needed to react to what you have stated. If they can repair a device, or modify it easily, we can solve the waste issue and promote reusability, but first they need access to achieve this, and commercial companies typically shy away from releasing designs in order to protect the IP that they keep in house to sell devices / solutions.

I would think for an organisation to achieve the same the community would need to help manufacturers and developers open and share hardware designs, software, repairability guides etc, but the reality today is as you have described.

One interesting conversation is around a kitemark (i.e. a stamp of approval similar to the Open Source Hardware Association's OSHWA Certification), but as it's not always hardware related, the kitemark could cover repairability (making enclosure designs open access, or levels of openness to start to address the issue). Have a look at https://certification.oshwa.org/ for more info. I spent some time discussing an Open IoT Kitemark with http://www.designswarm.com/ back in 2020 with similar values as you have described - https://iot.london/openiot/

You may want to talk more about this at the upcoming Conservation Optimism Summit too.

Happy to join you on your journey :)

Alasdair (Arribada)

30 March 2024 3:57pm

Hi @Alasdair

Great to hear from you! Thanks for the comment and for those very useful links (very interesting). And for letting @Rob_Appleby know. I can't wait to hear from her.

Open source is my preference as well. And it's a good idea. But developing the tech in house is already a step ahead of what would be the basic function of an organization that could manage the tech for a whole country/region.

I have sometimes witnessed how tech has not added much to the efficiency of local teams but has instead been a tool to promote the work of NGOs. Because of that, innovative technologies are not developed much further than a mere donation (from the local team's perspective). But for that tech to prove efficient, a lot more work in the field has to be done afterwards. The help of people with front-line expertise and lots of time to dedicate to the cause is essential (this proves too expensive for local NGOs, and this aspect is rarely considered).

I imagine this is something that needs to come from the side closer to the donors and international NGOs. Ideally, equipment would only be lent under a subscription model and not just donated without accountability for how that tech is used. That way, the resources can be distributed strategically over many projects, allowing the tech to be repurposed.

Sorry for stepping away from the technical talk; the thing is that sometimes the simplest things can make the most impact.

It would be good to know if anyone in the community has spent considerable time working in conservation in remote regions and has observed similar trends.

Thanks! Luciano

6 June 2024 11:52pm

Answering Peter's question to me above, about how MegaDetector's video processing stuff decides which detection to include in the video-level output file:

But ditto what Peter said, it sounds like you actually want the frame-level output file, and Peter gave you a tip about how to get that.

Also FWIW you will almost never want to process every frame of a video, nor will you likely want to train your models on every frame of a video; you're not getting a lot of new information from adjacent frames. Typically when MegaDetector users process videos, I advise sampling videos down to ~3fps, which is usually every 10th frame.
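The sampling arithmetic above can be written out as a small helper. This is only a sketch: the function names `frame_stride` and `sampled_frames` are hypothetical, and the native frame rate has to be read from your own videos.

```python
def frame_stride(native_fps, target_fps=3.0):
    """Return N such that keeping every Nth frame approximates target_fps."""
    if native_fps <= 0 or target_fps <= 0:
        raise ValueError("frame rates must be positive")
    # Never return less than 1, so low-fps videos keep every frame.
    return max(1, round(native_fps / target_fps))


def sampled_frames(native_fps, target_fps, total_frames):
    """List the frame indices that would be processed."""
    return list(range(0, total_frames, frame_stride(native_fps, target_fps)))


# A 30 fps video sampled at ~3 fps keeps every 10th frame.
print(frame_stride(30, 3))
print(sampled_frames(30, 3, 35))
```

The 1-5 fps range mentioned earlier in the thread would correspond to strides between 30 and 6 for a 30 fps video.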