Webinar: Citizen Science Online

SciStarter

26 March 2020 12:00am

WILDLABS Tech Hub: Poreprint

26 March 2020 12:00am

Prior work on Bird Flock identification

22 March 2020 9:44am

24 March 2020 3:49am

Just guessing, but I don't think it will make much difference whether it's an individual or a flock. The spectrogramme will look much the same, and I think that is what's used as the input to the CNN. If so, I would expect the model to be quite tolerant of flock size. Just spitballing here, though.
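To make the "spectrogram as CNN input" idea concrete, here is a minimal sketch in Python with NumPy (an assumption; the thread doesn't say which tools the model uses). It splits the audio into frames, applies a Hann window, and takes the log magnitude of each frame's FFT. The frame length and hop size are hypothetical defaults.

```python
import numpy as np

def log_spectrogram(signal, frame_len=1024, hop=512):
    """Log-magnitude spectrogram: one row per Hann-windowed frame.

    Returns an array of shape (num_frames, frame_len // 2 + 1).
    Assumes len(signal) >= frame_len. This 2-D array is the kind of
    "image" a CNN audio classifier often takes as input.
    """
    window = np.hanning(frame_len)
    num_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(num_frames)
    ])
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    # A small epsilon avoids log(0) on silent frames
    return np.log(magnitude + 1e-10)

# Usage: a 1-second 3 kHz test tone sampled at 44.1 kHz
fs = 44100
t = np.arange(fs) / fs
spec = log_spectrogram(np.sin(2 * np.pi * 3000 * t))
freqs = np.fft.rfftfreq(1024, d=1.0 / fs)
peak_hz = freqs[np.argmax(spec.mean(axis=0))]
print(spec.shape, round(peak_hz))
```

A flock and a single bird of the same species would both put their energy in the same frequency rows of this array, which is the intuition behind the guess above; whether a given model is actually tolerant of flock size is something to test on real recordings.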

24 March 2020 7:23am

Hi Harold!

Great to know you are in the domain. To be honest, my analysis so far indicates that, when taking a DSP approach to the spectrum, smoothing via convolution becomes an issue. Basically, the raw spectrum is too jagged to match, so one convolves it to smooth it, but then one just gets a generic "noise"-shaped spectrum. I also see variance between spectra sampled from the same source recording. I am using fs=44100 and initially a spectrum of 0 - 64kHz, although I tried filtering to 100 - 9k with little success.
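One way to reduce both problems described above is to average the magnitude spectrum over many short, overlapping frames (similar in spirit to Welch's method) before comparing, and to smooth with only a short kernel so the peaks survive. A minimal sketch, assuming NumPy, a 100 Hz to 9 kHz band, and cosine similarity as the matching score (all illustrative choices, not Andrew's pipeline). Note that with fs = 44100 Hz the FFT only covers 0 to 22.05 kHz (the Nyquist frequency, fs/2), so the sketch rejects bands above that limit.

```python
import numpy as np

def band_spectrum(signal, fs, f_lo=100.0, f_hi=9000.0, frame_len=2048, smooth_bins=3):
    """Frame-averaged magnitude spectrum restricted to [f_lo, f_hi] Hz.

    Averaging over many half-overlapping frames reduces frame-to-frame
    variance; a short moving-average kernel then smooths along frequency
    without flattening the peaks into a generic noise shape.
    """
    if f_hi > fs / 2:
        raise ValueError(f"f_hi={f_hi} Hz is above the Nyquist limit of {fs / 2} Hz")
    window = np.hanning(frame_len)
    starts = range(0, len(signal) - frame_len + 1, frame_len // 2)
    frames = np.stack([signal[s:s + frame_len] * window for s in starts])
    mean_mag = np.abs(np.fft.rfft(frames, axis=1)).mean(axis=0)
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / fs)
    band = (freqs >= f_lo) & (freqs <= f_hi)
    kernel = np.ones(smooth_bins) / smooth_bins
    return freqs[band], np.convolve(mean_mag[band], kernel, mode="same")

def cosine_similarity(a, b):
    """1.0 means identical spectral shape, regardless of overall loudness."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Usage with synthetic signals: two noisy takes of a 2 kHz tone vs a 5 kHz tone
fs = 44100
rng = np.random.default_rng(0)
t = np.arange(2 * fs) / fs
take_a = np.sin(2 * np.pi * 2000 * t) + 0.1 * rng.standard_normal(t.size)
take_b = np.sin(2 * np.pi * 2000 * t) + 0.1 * rng.standard_normal(t.size)
other = np.sin(2 * np.pi * 5000 * t) + 0.1 * rng.standard_normal(t.size)
_, sa = band_spectrum(take_a, fs)
_, sb = band_spectrum(take_b, fs)
_, so = band_spectrum(other, fs)
print(cosine_similarity(sa, sb), cosine_similarity(sa, so))
```

Widening `smooth_bins` reproduces the "generic noise shape" effect: the wider the kernel, the more every spectrum converges towards the same smooth curve and the less the similarity score can discriminate.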

My design outline is: I need to identify the presence of a flock of a certain bird species, I need to know when the flock is not present, and I need to distinguish other flocks of birds (not identify them), since they are sometimes similar in size and therefore possibly in call range. A sort of "We - Not We" approach.

I am comparing the gestalt sound, not individual calls.
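One way to frame the "We - Not We" design is as a three-class problem (target flock, other birds, background) with an explicit "uncertain" outcome, and to judge the overall (gestalt) sound over a longer period by voting across many short windows. The sketch below assumes some model already produces one raw score per class for each window; the class names, the 0.6 confidence threshold and the majority vote are hypothetical, illustrative choices rather than part of the original design.

```python
import math
from collections import Counter

# Hypothetical class names for the three-way "We - Not We" decision
LABELS = ("target_flock", "other_birds", "background")

def softmax(scores):
    """Turn raw per-class scores into probabilities (numerically stable)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def classify_window(scores, min_confidence=0.6):
    """Label one short window, or 'uncertain' if no class is confident enough."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    # Low-confidence windows are not forced into the target class
    if probs[best] < min_confidence:
        return "uncertain"
    return LABELS[best]

def classify_period(window_scores, min_confidence=0.6):
    """Majority vote over many windows, approximating a judgement on the overall sound."""
    votes = Counter(classify_window(s, min_confidence) for s in window_scores)
    votes.pop("uncertain", None)
    if not votes:
        return "uncertain"
    return votes.most_common(1)[0][0]

# Usage: three windows of hypothetical model scores
print(classify_period([[3.0, 0.0, 0.0], [2.5, 0.1, 0.0], [0.0, 3.0, 0.0]]))
```

Treating "other birds" as its own trained class, rather than hoping the target detector simply stays quiet, gives the model explicit negative examples of similar-sounding flocks.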

Plus: I am currently using a Raspberry Pi for the fog node, but I see that the TinyML examples use a Nano; can I use my Arduino Uno instead? I am interested in the power saving, but I need a robust microphone rig, which I currently get via USB.
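A quick back-of-envelope check helps with the Uno question: compare a model's working RAM against each board's on-chip SRAM. This sketch assumes the Nano in question is the Arduino Nano 33 BLE Sense used in the TinyML book, takes the "23 kB of RAM" figure from the tutorial title mentioned in this feed, and adds a hypothetical 25% headroom for audio buffers and the rest of the program.

```python
# Published on-chip SRAM sizes for the two boards mentioned in the thread
BOARD_SRAM_BYTES = {
    "Arduino Uno (ATmega328P)": 2 * 1024,
    "Arduino Nano 33 BLE Sense (nRF52840)": 256 * 1024,
}

def fits_in_ram(model_ram_bytes, board, headroom=0.25):
    """True if the model's working RAM plus a safety margin fits in the board's SRAM.

    The 25% headroom is a hypothetical allowance for audio buffers,
    the inference runtime and the rest of the sketch.
    """
    return model_ram_bytes * (1 + headroom) <= BOARD_SRAM_BYTES[board]

tutorial_model_ram = 23 * 1000  # "23 kB of RAM", as quoted in the tutorial title
for board in BOARD_SRAM_BYTES:
    print(board, fits_in_ram(tutorial_model_ram, board))
```

On these numbers, a 23 kB model is roughly ten times larger than the Uno's entire 2 KB of SRAM, while it uses under a tenth of the Nano 33 BLE Sense's 256 KB.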

I will check out your tutorial, many thanks!

Tally ho!

Andrew.

Virtual Field Trip: Conservation Technology with Shah Selbe

Shah Selbe

24 March 2020 12:00am

Online Workshop: Conservation Technology

Hack the Poacher

23 March 2020 12:00am

Mobile App for Illegal Ivory Sales

22 March 2020 10:56pm

Illegal Ivory Sales on the Internet App

22 March 2020 10:52pm

Esri - Mapping to Save our Planet's Biodiversity

19 March 2020 10:46am

Technology Showroom of Artificial Intelligence (AI) aided Elephant Early Warning Systems

6 March 2020 6:09pm

19 March 2020 9:15am

Hi @Tim Vedanayagam

Thank you for posting this. I'd be happy to contribute to the thermal sensing work under way. Can you confirm: have you built a thermal AI model and trained it on labelled data for a particular camera?

We have been training a model for low-cost (Lepton 3.5) thermal cameras via a challenge with WWF / Wildlabs, and have 30,000 labelled images of Asian elephants as our training dataset. We're focusing on Deeplabel and YOLO, with a plan to port to TensorFlow, and it will be open source, so others can use and adopt it in early warning systems that use thermal cameras.
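For readers new to YOLO training data: each labelled image is commonly paired with a text file holding one line per object in the Darknet/YOLO format, `class x_center y_center width height`, with all coordinates normalised to the image size. A minimal sketch of that conversion, assuming the Lepton 3.5's 160 × 120 pixel resolution and a hypothetical class ID 0 for "elephant" (the post doesn't say which export format the Deeplabel labels use):

```python
def to_yolo_label(class_id, x_min, y_min, x_max, y_max, img_w=160, img_h=120):
    """Convert a pixel bounding box to one Darknet/YOLO label line.

    Output fields: class x_center y_center width height, all normalised
    to [0, 1] by the image width and height.
    """
    if not (0 <= x_min < x_max <= img_w and 0 <= y_min < y_max <= img_h):
        raise ValueError("box must lie inside the image and have positive size")
    x_c = (x_min + x_max) / 2 / img_w
    y_c = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{class_id} {x_c:.6f} {y_c:.6f} {w:.6f} {h:.6f}"

# Usage: a hypothetical elephant box covering the centre of a 160 x 120 frame
print(to_yolo_label(0, 40, 30, 120, 90))  # 0 0.500000 0.500000 0.500000 0.500000
```

Because the coordinates are normalised, the same label files stay valid if the images are later upscaled for a detector that expects a larger input size.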

More info here - https://www.zsl.org/blogs/conservation/zsl-whipsnade-zoo-becomes-a-space-for-high-tech-wild-elephant-conservation

Kind regards,

Alasdair

Machine learning fish monitoring and the seafood sector

17 March 2020 3:29pm

17 March 2020 7:58pm

Do the people approaching you have defined problem statements or use cases? That's been one of the biggest challenges in scaling high-tech fisheries monitoring from either the public or private side. Unless there's a mandate to use it (which there is in Australia and the EU), the ROI is usually too low for individuals or companies to invest in it, and the potential markets are too small. Check out this CEA/TNC report for more scoping. http://tnc.org/emreport

18 March 2020 12:48pm

Thanks Kate - that's really helpful. The company in question are investors in an emerging high-end aquaculture venture, and I assume their interest is in using individual fish tracking to drive greater efficiency, e.g. to adjust feed inputs, estimate growth rates and detect disease, all of which seems to be the intention of the Tidal Project. I'll get back to them with more questions and make some onward connections. If anyone else in the community has any links, please drop me a line on here!
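As an illustration of the "estimate growth rates" use case: once individual fish can be re-identified and weighed (or have their weight estimated from images) at two points in time, a standard aquaculture metric is the specific growth rate, SGR = 100 × (ln W₂ − ln W₁) / days, in percent body weight per day. The thread doesn't say how growth would be estimated; the fish IDs and weights below are hypothetical.

```python
import math

def specific_growth_rate(weight_start_g, weight_end_g, days):
    """Specific growth rate in % body weight per day: 100 * (ln W2 - ln W1) / days."""
    if weight_start_g <= 0 or weight_end_g <= 0 or days <= 0:
        raise ValueError("weights and days must be positive")
    return 100 * (math.log(weight_end_g) - math.log(weight_start_g)) / days

# Usage: hypothetical per-fish weights (grams) from two sessions 30 days apart
tracked = {"fish_01": (120.0, 180.0), "fish_02": (110.0, 125.0)}
for fish_id, (w1, w2) in tracked.items():
    print(fish_id, round(specific_growth_rate(w1, w2, 30), 2))  # fish_01 -> 1.35
```

Per-fish SGR values like these are what would let an operator spot slow growers early, which is where the link to feed adjustment and disease detection would come in.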

WILDLABS Virtual Workshop Recording: Running Engaging Events on Zoom

18 March 2020 12:00am

Enter the Zooniverse: Try Citizen Science for Yourself!

Ellie Warren

18 March 2020 12:00am

Webinar: IIED Community-Based Approaches to Tackling Poaching and Illegal Wildlife Trade

IIED

17 March 2020 12:00am

Tutorial: Train a TinyML Model That Can Recognize Sounds Using Only 23 kB of RAM

Daniel Situnayake

16 March 2020 12:00am

Able to Provide Movement Detection Software For Live Feed Video

16 January 2020 5:15am

11 March 2020 3:22am

Hi Ruth,

Thanks for your reply and the suggestion.

I contacted Air Shepherd and a few similar groups a few months ago, and they all said they were not interested due to the difficult drone regulations. Hopefully the regulations are not as arduous in Zimbabwe?

Please don't hesitate to ask if there is any technical information or assistance that I can provide to help with your project,

Jessi Hargrave

15 March 2020 5:15pm

Hi Jessi,

I can check on the regulations for the specific area that is planned for a wildlife sanctuary in Zimbabwe. What altitude does the drone need to operate in? Does it require communications with any ground-based facility?

Ruth

15 March 2020 11:40pm

Hi Ruth,

Thanks for your email.

Essentially, the software is only an advantage if the drones are flown at altitudes that cover a wide area with less visible detail. For example, if the operator flies at 3000ft instead of 1000ft, the drone will cover 9x the area, but objects will appear very small (detail the software can pick up but the human eye may miss). In addition, the software needs the video feed on a computer (it sort of overlays the video), so it is only useful for drones that can relay decent-quality video to a ground control station where an operator is viewing it live.
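The 9x figure follows from simple geometry: for a camera pointing straight down over flat ground, the footprint's width and height both scale linearly with altitude, so its area scales with altitude squared, and (3000 / 1000)² = 9. The same factor of 3 means each pixel covers 3x more ground in each direction, so an animal occupies roughly 1/9 as many pixels. A minimal check, assuming a nadir camera, flat terrain and a hypothetical 60° × 45° field of view:

```python
import math

FT_TO_M = 0.3048

def ground_footprint(altitude_m, hfov_deg, vfov_deg):
    """Width and height (m) of flat ground seen by a camera pointing straight down."""
    width = 2 * altitude_m * math.tan(math.radians(hfov_deg) / 2)
    height = 2 * altitude_m * math.tan(math.radians(vfov_deg) / 2)
    return width, height

# Field-of-view values are hypothetical; the area ratio does not depend on them
low_w, low_h = ground_footprint(1000 * FT_TO_M, 60, 45)
high_w, high_h = ground_footprint(3000 * FT_TO_M, 60, 45)
area_ratio = (high_w * high_h) / (low_w * low_h)
print(round(area_ratio, 3))  # 9.0: footprint area grows with altitude squared
```

The field of view cancels out of the ratio, which is why the 9x rule of thumb holds for any camera as long as it points straight down.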

I have been advised that the drones this is most useful for are likely to weigh at least 5kg (i.e. not the small toy ones that send video to a tablet).

I hope that helps? Please feel free to ask anything else and I will see what I can find out,

Jessi

Reading tips on De-extinction/Regenesis

12 March 2020 4:10pm

Success recording bees using AudioMoth

7 July 2019 6:45pm

11 March 2020 11:16am

How can we learn more about your BEESWAX7 buzz identification and counting program, and discuss working together?

Testing an Early Warning System to Mitigate Human-Wildlife Conflict on the Bhutan-India Border

Aditya Gangadharan

11 March 2020 12:00am

Announcing the iWildCam 2020 Camera Trap Kaggle Competition!

10 March 2020 7:08pm

Proximity Data - Analysis methods

10 March 2020 2:44pm

3 Ways Your Conservation Technology Could Become a Shiny Pile of Junk, and How to Avoid It

Aditya Gangadharan

9 March 2020 12:00am

Project Advice: Average speed camera system

7 March 2020 6:26pm

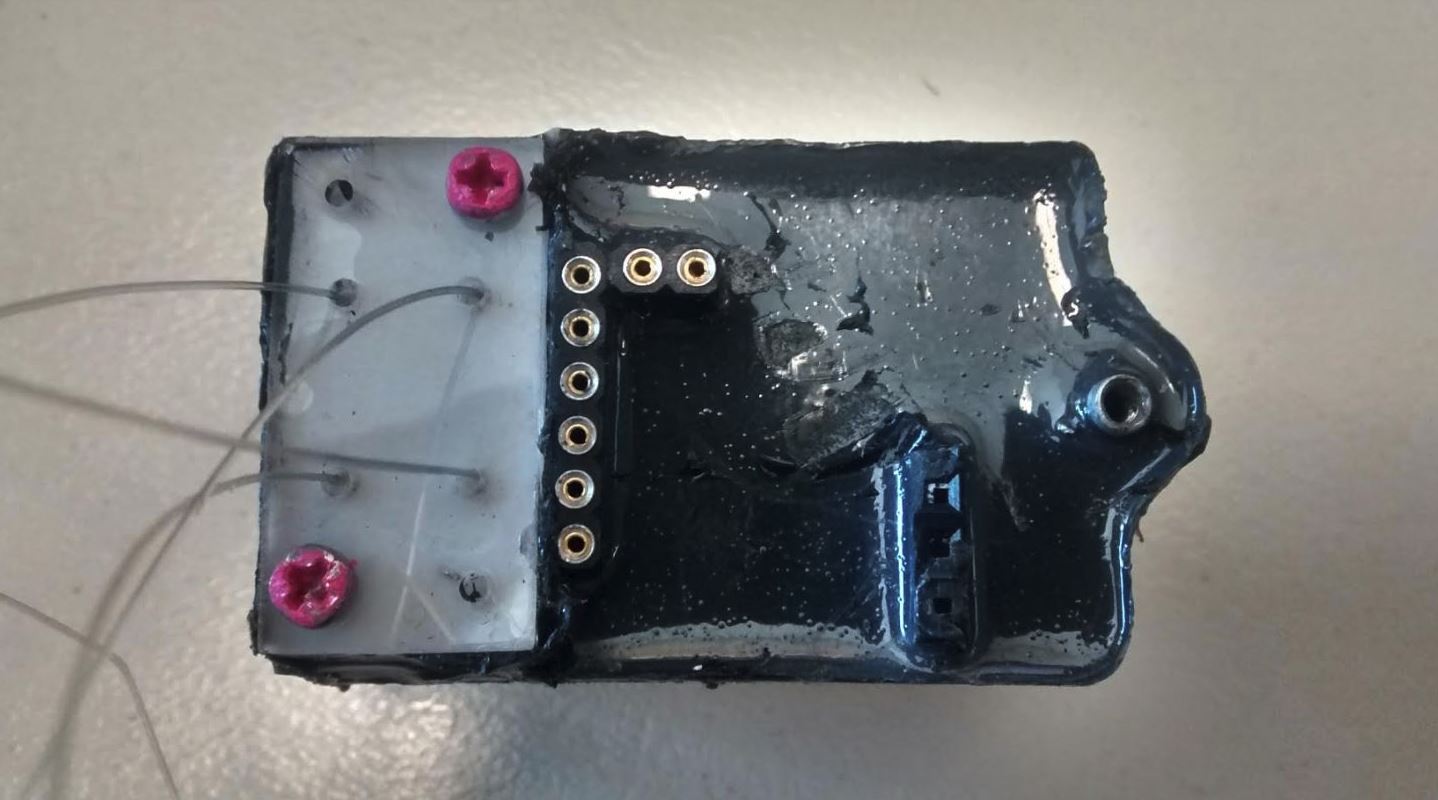

OpenCollar Update 1

6 March 2019 12:05pm

18 April 2019 3:51pm

Hi Jackson,

Attached are a few images showing how we attach the koala drop-off to the collar material. The first shows the bare nichrome-acrylic plate with the nylon line in situ. There's also a picture of an actual koala drop-off with the line exiting the plate. Lastly, there's one of the triple overhand knots (repeated so the knots are doubled over and secure) tying the line through the collar material. Normally, we hide the nylon by splitting the collar material in half, tying the knots, and then gluing the collar material back together so no nylon is exposed.

Does all that make sense? Any questions just let me know.

Cheers,

Rob

19 April 2019 7:55am

Also, we noticed our BoM was missing from GitHub, so we've added it now: https://github.com/Wild-Spy/OpenDrop/blob/master/Documentation/OpenDrop_BOM.xls

5 March 2020 4:44am

The drop-off paper:

https://besjournals.onlinelibrary.wiley.com/doi/10.1111/2041-210X.13231

Accepting Applications: ArcGIS Solutions for Protected Area Management

esri

4 March 2020 12:00am

#Tech4Wildlife 2020 Photo Challenge In Review

4 March 2020 12:00am

sound loop device

3 March 2020 1:59am

Competition: Plastic Data Challenge

The Incubation Network

3 March 2020 12:00am

Call for Nominations: Tusk Conservation Awards

Tusk

3 March 2020 12:00am

Open-Source Argos Developer's Kit / Tag

2 March 2020 10:27pm

How to add a salt water switch

28 February 2020 4:52pm

23 March 2020 9:01pm

Hi Andrew,

Dan here—I'm one of the authors of the TinyML book! I love your Withymbe project; I've previously done work involving embedded systems and insects, and it's interesting to hear about your plans for bird flocks.

As long as you have sufficient data, you should be able to identify different bird sounds and discern them from background noise. The TinyML book has a chapter that introduces the underlying techniques, and I'd also recommend taking a look at www.edgeimpulse.com - we've built a set of tools designed to make it easy to train these types of models.
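"Sufficient data" in practice means many labelled examples of each class. A common way to get them from long field recordings is to cut each recording into fixed-length, overlapping clips that inherit the recording's label. A minimal sketch, assuming 1-second clips with 50% overlap and a hypothetical 16 kHz sample rate and label name (the tools mentioned above may handle this step differently):

```python
def make_clips(recording, fs, label, clip_s=1.0, hop_s=0.5):
    """Cut a long recording into overlapping fixed-length clips, all tagged with `label`.

    `recording` can be any sliceable sequence of samples (e.g. a list or a
    NumPy array). Trailing samples shorter than one clip are dropped.
    """
    clip_len = int(clip_s * fs)
    hop = int(hop_s * fs)
    if clip_len <= 0 or hop <= 0:
        raise ValueError("clip and hop lengths must be at least one sample")
    return [
        (recording[start:start + clip_len], label)
        for start in range(0, len(recording) - clip_len + 1, hop)
    ]

# Usage: 3 s of (silent, placeholder) audio at a hypothetical 16 kHz sample rate
clips = make_clips([0.0] * 48000, fs=16000, label="target_flock")
print(len(clips))  # 5 clips starting at 0, 0.5, 1.0, 1.5 and 2.0 s
```

Overlap multiplies the number of training examples, but overlapping clips from the same recording are not independent, so it is safer to split train and test sets by recording rather than by clip.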

We actually recently published a tutorial on Wildlabs about this very concept:

https://www.wildlabs.net/resources/case-studies/tutorial-train-tinyml-model-can-recognize-sounds-using-only-23-kb-ram

I'm always excited to learn about new applications; feel free to reach out if there's any way we can help. I'm [email protected].

Warmly,

Dan